The key points in 30 seconds

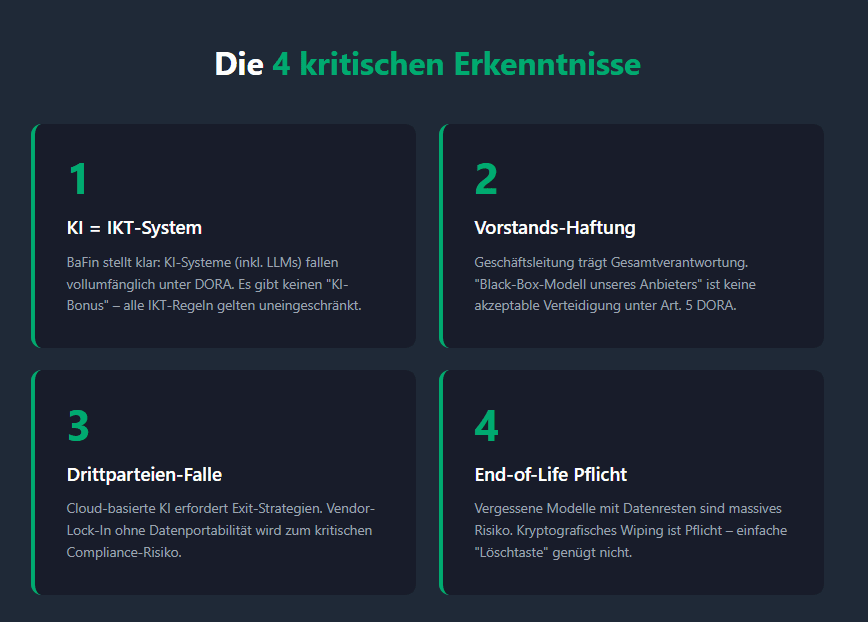

- AI is “just” software: BaFin clarifies that AI systems (including LLMs) are treated as ICT systems under DORA. There is no “AI bonus” – the DORA rules on risk management and governance apply without restriction.

- Liability of management: The management body bears overall responsibility. Not knowing how the deployed AI models work does not protect against regulatory consequences (Art. 5 DORA).

- Third-party risk as an Achilles heel: Because most financial companies source AI via cloud services or APIs, ICT third-party risk management becomes a critical bottleneck. Exit strategies for LLMs are mandatory.

- Life cycle trap: Security must be ensured from data acquisition through to decommissioning (end of life). Leftover data in “forgotten” models is a significant compliance risk.

The paradigm shift: From “Innovation Lab” to “Regulatory Duty”

Many financial companies treat Generative AI (GenAI) as an isolated innovation project. BaFin's guidance, published on December 18, 2025, ends this phase.

The core message is simple:

AI systems are ICT systems.

This means that AI applications fall entirely under the Digital Operational Resilience Act (DORA). For decision-makers, the use of AI is therefore a central topic of operational resilience. Anyone who uses AI without adapting ICT governance is operating blind from a regulatory perspective.

What makes this guidance so explosive is not what it says, but what it implies: There is no grace period for experimental AI implementations in core banking or insurance business.

Governance & Organization: The Board's responsibility

BaFin explicitly calls for an AI strategy that is integrated into the business strategy.

- Strategic implication: The management body must have “sufficient knowledge” to assess AI risks.

- The risk: If an AI model hallucinates and this results in financial harm, regulators will ask: “Did you understand and approve how it works?” An answer like “That was a black box model from our provider” will not be accepted under DORA.

- Action item: Implement a compliance dashboard for AI (as recommended in the BaFin case study) that centrally records model versions, risk assessments and responsibilities. Transparency is your insurance.
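
The data behind such a dashboard can be very simple. The following is a minimal sketch of an inventory record, not a format prescribed by BaFin: the field names, the example systems and the 365-day review interval are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AIModelRecord:
    """One inventory entry; all field names are illustrative assumptions."""
    name: str
    version: str
    provider: str
    owner: Optional[str] = None              # accountable person or unit
    risk_assessed_on: Optional[date] = None  # date of the last risk assessment
    supports_critical_function: bool = False


def find_gaps(record: AIModelRecord, today: date, max_age_days: int = 365) -> list[str]:
    """Return human-readable governance gaps for one record."""
    gaps = []
    if record.owner is None:
        gaps.append("no accountable owner")
    if record.risk_assessed_on is None:
        gaps.append("no risk assessment")
    elif (today - record.risk_assessed_on).days > max_age_days:
        # The review interval is an assumed internal policy, not a DORA figure.
        gaps.append("risk assessment out of date")
    return gaps


# Hypothetical inventory with one documented and one undocumented system.
inventory = [
    AIModelRecord("credit-scoring-assistant", "2025-11", "ExampleCloud",
                  owner="Head of Credit Risk",
                  risk_assessed_on=date(2025, 12, 1),
                  supports_critical_function=True),
    AIModelRecord("internal-chatbot", "v3", "ExampleSaaS"),
]

report = {r.name: find_gaps(r, today=date(2026, 1, 15)) for r in inventory}
```

In this example the report flags the chatbot as lacking both an owner and a risk assessment, which is exactly the kind of gap a management body should see at a glance.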

ICT third-party risk: The dependency trap

The reality in the financial sector is clear: Hardly any institution develops its own foundation models on-premises. Most use APIs from hyperscalers or specialized SaaS solutions.

This is where BaFin's guidance goes into depth. When using cloud-based AI services, the strict rules of the RTS (Regulatory Technical Standards) on subcontracting apply.

Critical points for decision-makers:

- Vendor lock-in: You must have an exit strategy. What happens if your US cloud provider discontinues the service or increases prices tenfold? Can you export your training data and configurations?

- Shadow IT: Employees often use unauthorized AI tools, which bypasses all security checks. BaFin expects technical measures (e.g. web proxies, DLP) to prevent data leaks.

- Model Drift & Updates: With cloud models, the provider often changes the model in the background. An update outside your control can change the basis for your lending decisions overnight.
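
One way to notice silent provider updates is to replay a fixed set of approved test cases regularly and compare the results with a baseline. The sketch below assumes that each test case yields a discrete decision label and that the provider reports a version string. The function names, the labels and the tolerance are hypothetical and not taken from the BaFin guidance.

```python
def drift_rate(baseline: dict[str, str], current: dict[str, str]) -> float:
    """Share of probe cases whose decision differs from the approved baseline."""
    if not baseline:
        raise ValueError("baseline must contain at least one probe case")
    changed = sum(
        1 for case_id, decision in baseline.items()
        if current.get(case_id) != decision  # a missing case counts as changed
    )
    return changed / len(baseline)


def needs_revalidation(pinned_version: str, reported_version: str,
                       baseline: dict[str, str], current: dict[str, str],
                       tolerance: float = 0.0) -> bool:
    """Flag the model if the version changed or decisions drifted past the tolerance."""
    if reported_version != pinned_version:
        return True
    return drift_rate(baseline, current) > tolerance


# Hypothetical probe set for a lending use case.
approved = {"case-1": "approve", "case-2": "reject", "case-3": "approve", "case-4": "reject"}
todays_run = {"case-1": "approve", "case-2": "approve", "case-3": "approve", "case-4": "reject"}

rate = drift_rate(approved, todays_run)
flagged = needs_revalidation("2025-11", "2025-11", approved, todays_run)
```

Here one of four decisions changed although the reported version did not, so the model is flagged for revalidation. For free-text outputs an exact comparison is too strict, and a similarity measure with a tolerance would be needed instead.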

Cyber Security: Understanding New Attack Vectors

The guidance highlights specific attack vectors that do not exist in classic ICT systems. What is at stake is the integrity of the model's logic.

The three big dangers:

- Data Poisoning: Attackers manipulate training data to subtly steer the AI's behavior.

- Adversarial Attacks / Prompt Injection: Crafted inputs that circumvent the AI's security mechanisms (e.g. to extract confidential customer data).

- Model inversion: Reconstruction of training data from model outputs, which can result in data protection violations (GDPR).

“Security by Design” is not optional. For critical functions, BaFin recommends isolated environments (containerization) and strict “human-in-the-loop” processes, especially for automated decisions.
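
A “human-in-the-loop” rule can be expressed as a small routing gate in front of any automated decision. The following is a minimal sketch under stated assumptions: the `ModelDecision` fields, the confidence score and the 0.9 threshold are hypothetical and would need to be defined by your own risk policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelDecision:
    case_id: str
    outcome: str       # e.g. "approve" or "reject"
    confidence: float  # model-reported score in [0, 1]; assumed to be available
    critical: bool     # does the decision affect a critical function?


def route(decision: ModelDecision, min_confidence: float = 0.9) -> str:
    """Return "auto" only for non-critical decisions with high confidence."""
    if decision.critical:
        return "human_review"  # critical functions always get a human check
    if decision.confidence < min_confidence:
        return "human_review"  # uncertain results are escalated
    return "auto"


routes = [
    route(ModelDecision("c1", "approve", 0.99, critical=True)),
    route(ModelDecision("c2", "approve", 0.50, critical=False)),
    route(ModelDecision("c3", "approve", 0.95, critical=False)),
]
```

The design choice is deliberately conservative: the gate lets a decision through automatically only when every condition is met, so anything unexpected ends up with a person rather than in production.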

The Life Cycle: The Forgotten Risk of “Decommissioning”

An often overlooked aspect that BaFin strongly emphasizes is end-of-life management.

What happens to an AI assistant when it is turned off? Historical prompts, training data and model weights often contain highly sensitive information.

The danger: If old models are not securely wiped (cryptographic wiping), they can be leaked years later and reveal trade secrets. A simple “delete button” is often not enough, especially in complex cloud environments.
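
Decommissioning becomes auditable once every artefact type needs documented proof of erasure before a system counts as retired. The sketch below only illustrates this bookkeeping, not the wiping itself. The artefact list follows the examples above, with backups added as an assumption, and the evidence strings are hypothetical.

```python
# Artefact types that must be covered; "backups" is an added assumption.
REQUIRED_ARTEFACTS = ("prompt_logs", "training_data", "model_weights", "backups")


def open_items(erasure_evidence: dict[str, str]) -> list[str]:
    """Return artefact types that still lack a reference to erasure evidence."""
    return [
        artefact for artefact in REQUIRED_ARTEFACTS
        if not erasure_evidence.get(artefact, "").strip()
    ]


def is_decommissioned(erasure_evidence: dict[str, str]) -> bool:
    """A system counts as retired only when no artefact type is left open."""
    return not open_items(erasure_evidence)


# Hypothetical state of a retired AI assistant.
evidence = {
    "prompt_logs": "ticket-001: encryption key destroyed",
    "model_weights": "provider deletion confirmation on file",
}

remaining = open_items(evidence)
```

In this example the training data and the backups are still open, so the assistant is switched off but not yet decommissioned.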

Conclusion: act instead of react

Although the BaFin guidance is legally “non-binding”, it defines the benchmark against which auditors will measure compliance with DORA. Anyone who ignores this guidance risks serious findings in the next audit.

Strategic recommendations for the C-level:

- Audit of the AI landscape: Identify all AI systems (including shadow IT) and classify them according to criticality.

- DORA integration: Don’t build a parallel “AI governance.” Integrate AI risks seamlessly into your existing ICT risk management framework (Article 6 DORA).

- Competence building: Train not only IT but, above all, the business departments and management. Awareness of risks such as “hallucinations” and “bias” must become part of the corporate culture.

The market doesn't wait. Institutions that treat AI governance as an enabler for secure innovation now will gain a significant competitive advantage.

Next step: Free initial consultation

Would you like to implement DORA compliance in a timely manner? Our experts will be happy to advise you, without obligation and with a practical focus. Arrange an initial consultation now →

📖 Also read: TLPT under DORA - Is your company ready for a live cyberattack under regulator supervision?

📖 Also read: AI in finance: From black box risk to audit-proof strategic asset