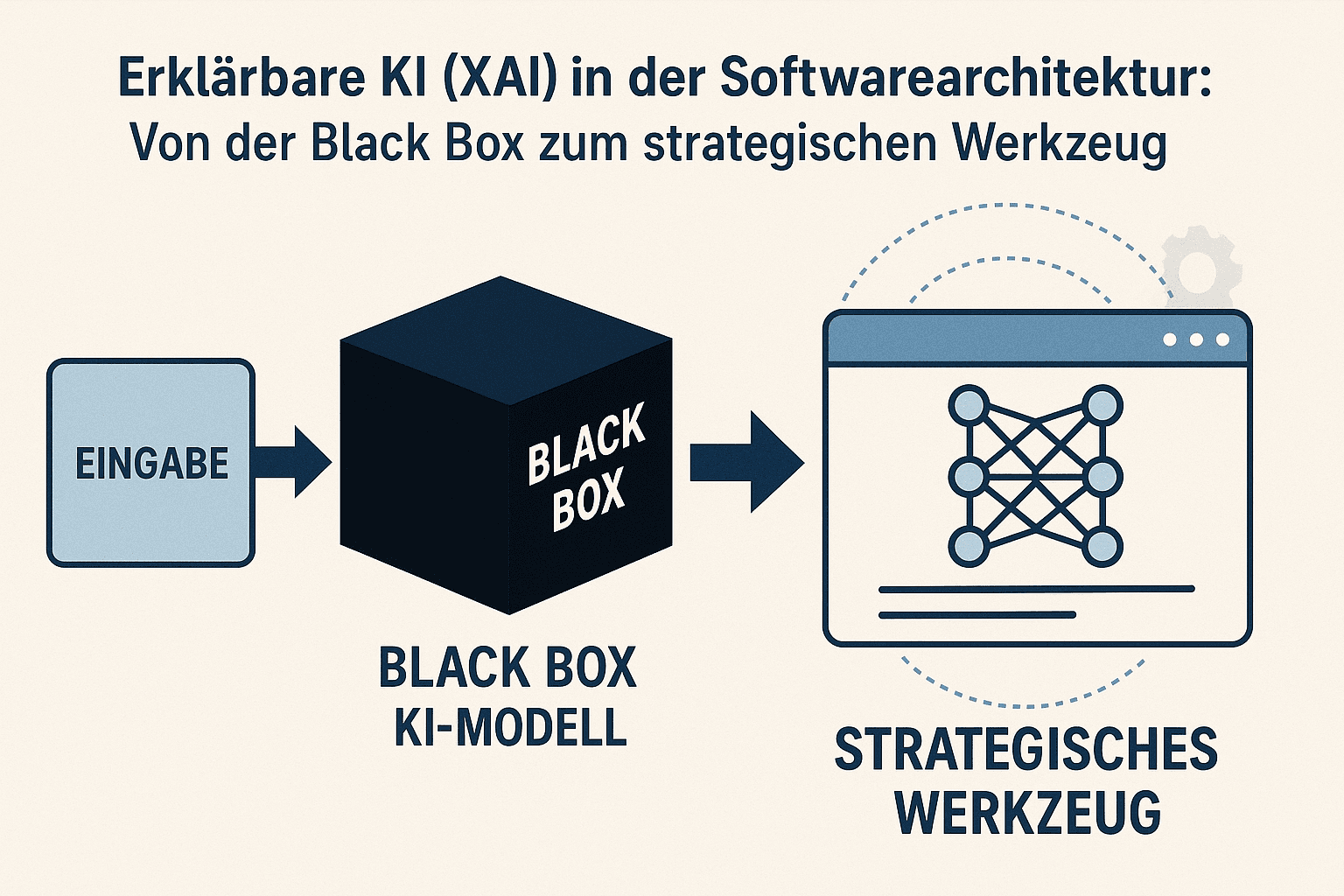

Explainable AI (XAI) in software architecture: From black box to strategic tool

The blind spot of modern AI systems: Why explainable AI (XAI) is indispensable

Intelligent systems deliver impressive results, but they often operate as an opaque “black box”: they give an answer without giving reasons. For companies, this lack of transparency is becoming an unacceptable business risk. When a system makes a critical decision, be it in lending, medical diagnostics, or predictive maintenance, and no one can follow the logic behind it, trust erodes and serious errors can slip through unnoticed.

This is where explainable AI (XAI) comes into play: it makes AI decisions understandable and transparent. This article shows how to anchor explainable AI not as an afterthought, but as a central pillar of your software architecture. We'll show you how targeted transparency helps you build trust, meet regulatory requirements, and secure the value of your AI investments.

Detailed analysis

The “black box” as a business risk: Why traceability is becoming indispensable

An AI model whose decision-making processes remain hidden is a latent danger. False predictions can go undetected and lead to flawed business strategies. Biased recommendations that stem from unsuitable training data can lead, unnoticed, to discriminatory or unfair results and expose your company to legal and ethical conflicts.

The demand for transparency comes not only from internal stakeholders who need to ensure the reliability of the systems. Regulatory authorities are also increasingly demanding clear documentation and justification for automated decisions. An architecture that ignores this traceability from the start creates technical debt that can only be remedied later with considerable effort.

A system that cannot explain why it has made a particular choice is uncontrollable from a business perspective. It acts like a highly qualified employee who cannot justify their recommendations - an unacceptable situation for any manager.

Methods of Explainability: An Architectural View

To create transparency, architects have two basic paths that require deep design decisions.

1. Intrinsically transparent models: These models are inherently understandable. They include simpler structures such as linear models or decision trees, whose logic can be read directly. The architectural trade-off often lies in performance: their predictive quality may not be sufficient for highly complex problems. Their strength lies in applications where clear and direct traceability is the top priority.

2. Post hoc explanation approaches: These methods are applied to complex, opaque models (e.g. deep neural networks) to interpret their behavior after the fact. Approaches such as LIME (Local Interpretable Model-agnostic Explanations) or SHAP (SHapley Additive exPlanations) act as “translators”. They analyze a specific decision and highlight which input factors had the greatest impact on the outcome. Architecturally, this means implementing an additional service or layer that generates these explanations on demand.
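The first of the two paths, intrinsic transparency, can be illustrated with a minimal Python sketch. It assumes a hypothetical linear scoring model for a lending decision; the feature names, weights, and threshold are invented for illustration and are not taken from any real system. The point is only that the explanation is the model itself: each feature's contribution is its weight times its value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinearScoreModel:
    """A deliberately simple, intrinsically transparent scoring model."""

    weights: dict[str, float]  # hypothetical feature weights
    bias: float
    threshold: float  # scores at or above this value are approved

    def score(self, features: dict[str, float]) -> float:
        return self.bias + sum(w * features[name] for name, w in self.weights.items())

    def decide(self, features: dict[str, float]) -> bool:
        return self.score(features) >= self.threshold

    def explain(self, features: dict[str, float]) -> list[tuple[str, float]]:
        """Return each feature's additive contribution, largest magnitude first."""
        contributions = {name: w * features[name] for name, w in self.weights.items()}
        return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)


# Illustrative values only (assumptions, not real lending criteria).
model = LinearScoreModel(
    weights={"income_k": 0.04, "debt_ratio": -3.0, "years_employed": 0.1},
    bias=-1.0,
    threshold=0.0,
)
applicant = {"income_k": 50.0, "debt_ratio": 0.5, "years_employed": 4.0}

print("approved:", model.decide(applicant))
for name, contribution in model.explain(applicant):
    print(f"{name}: {contribution:+.2f}")
```

Because the contributions plus the bias add up exactly to the score, no separate explanation component is needed. The price is the limited expressiveness the list item above describes.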

| Approach | Architectural implication | Ideal usage scenario |
| --- | --- | --- |
| Intrinsic transparency | Selecting simpler model types; the explanation is part of the model itself. | Regulatory-sensitive areas where every decision must be fully justified. |
| Post hoc explanations | Integration of a separate explanation service; requires additional computing power. | High-performance systems where complex models are essential, but individual decisions must be analyzed. |
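The post hoc path can be sketched in a similar way. The following Python example computes exact Shapley values, the quantity that SHAP approximates, for a single prediction by enumerating all feature coalitions. It is a sketch under stated assumptions: `opaque_model` is a hypothetical stand-in for a complex model, "absent" features are replaced by a fixed baseline value, and the function does not use or mirror the API of the SHAP library. Exact enumeration is exponential in the number of features, so it only works for very small inputs.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Sequence


def shapley_values(
    predict: Callable[[Sequence[float]], float],
    instance: Sequence[float],
    baseline: Sequence[float],
) -> list[float]:
    """Exact Shapley attribution of predict(instance) relative to predict(baseline)."""
    n = len(instance)

    def value(coalition: frozenset) -> float:
        # Features in the coalition keep the instance's value;
        # all others fall back to the baseline value.
        mixed = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(mixed)

    attributions = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                s = frozenset(coalition)
                phi += weight * (value(s | {i}) - value(s))
        attributions.append(phi)
    return attributions


def opaque_model(x: Sequence[float]) -> float:
    """Hypothetical black box with an interaction between features 1 and 2."""
    return 2.0 * x[0] + x[1] * x[2]


instance = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(opaque_model, instance, baseline)
print(phi)
```

For this toy model the attributions come out as 2 for the first feature and 3 each for the two interacting features. They sum to the difference between the prediction for the instance and the prediction for the baseline, which is what makes such an explanation additive.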

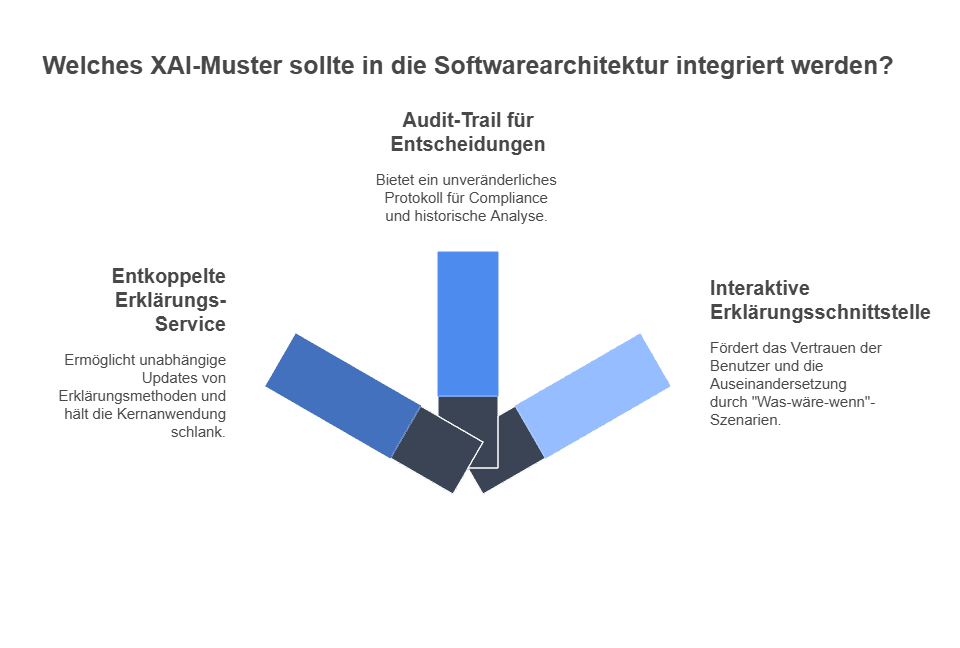

Anchoring XAI in the software architecture: Concrete patterns

Integrating XAI is not a task for data scientists alone. It must be thought through by architects and anchored in the system design.

- Pattern 1: The decoupled explanation service. Implement the explanation logic as a standalone microservice. Your prediction service delivers the result, and when necessary, an application calls the explanation service with the prediction's ID, which returns a structured justification. This keeps your core application lean and allows you to update explanation methods independently of the prediction model.

- Pattern 2: The audit trail for decisions. Store not only the outcome of an AI decision, but also the explanation behind it, in an immutable log. This audit trail is invaluable for troubleshooting, maintaining compliance, and analyzing system behavior over time. It answers the question "Why did the system make this decision three months ago?".

- Pattern 3: The interactive explanation interface. Offer end users an interface that displays not only the result, but also a visual or textual presentation of the reasoning. Let users explore through “what if” scenarios how the decision would change if the inputs changed. This builds trust and encourages active engagement with the system.
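The three patterns can be sketched together in a few lines of Python. This is an in-memory illustration under stated assumptions, not a production design: `model_predict` and `model_explain` are hypothetical stand-ins for a real model and explainer, the class names and the prediction ID are invented, and a real system would run the two services as separate deployments with durable storage. The audit trail uses a SHA-256 hash chain, which makes later tampering detectable rather than physically impossible.

```python
import hashlib
import json
from dataclasses import dataclass, field

Features = dict[str, float]


def model_predict(features: Features) -> float:
    """Hypothetical stand-in for the real prediction model."""
    return 0.5 * features["usage_hours"] - 2.0 * features["maintenance_score"]


def model_explain(features: Features) -> Features:
    """Hypothetical explainer: output change when one feature is set to zero."""
    base = model_predict(features)
    return {name: base - model_predict({**features, name: 0.0}) for name in features}


@dataclass
class AuditTrail:
    """Pattern 2: append-only log in which each entry hashes its predecessor."""

    entries: list[dict] = field(default_factory=list)

    @staticmethod
    def _digest(prev_hash: str, record: dict) -> str:
        payload = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode()).hexdigest()

    def append(self, record: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        entry = {"record": record, "prev": prev_hash, "hash": self._digest(prev_hash, record)}
        self.entries.append(entry)

    def verify(self) -> bool:
        prev_hash = "0" * 64
        for entry in self.entries:
            if entry["prev"] != prev_hash or entry["hash"] != self._digest(prev_hash, entry["record"]):
                return False
            prev_hash = entry["hash"]
        return True


@dataclass
class PredictionService:
    """Delivers results and remembers the inputs under a prediction ID."""

    store: dict[str, Features] = field(default_factory=dict)

    def predict(self, prediction_id: str, features: Features) -> float:
        self.store[prediction_id] = dict(features)
        return model_predict(features)


@dataclass
class ExplanationService:
    """Pattern 1: generates explanations on demand, keyed by prediction ID."""

    predictions: PredictionService
    audit: AuditTrail

    def explain(self, prediction_id: str) -> dict:
        features = self.predictions.store[prediction_id]
        record = {
            "prediction_id": prediction_id,
            "features": dict(features),
            "output": model_predict(features),
            "explanation": model_explain(features),
        }
        self.audit.append(record)  # store the outcome together with its explanation
        return record

    def what_if(self, prediction_id: str, **changes: float) -> float:
        """Pattern 3: re-run the model with some inputs changed."""
        return model_predict({**self.predictions.store[prediction_id], **changes})


predictor = PredictionService()
audit = AuditTrail()
explainer = ExplanationService(predictor, audit)

output = predictor.predict("pred-001", {"usage_hours": 10.0, "maintenance_score": 1.5})
record = explainer.explain("pred-001")
print(output, record["explanation"])
print("what if:", explainer.what_if("pred-001", maintenance_score=0.5))
print("audit intact:", audit.verify())
```

Keeping the explainer behind its own interface means the explanation method can be swapped without touching `PredictionService`, which is the independence the first pattern aims for.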

Strategic Implications & Key Insights

- From model performance to system trustworthiness: The focus shifts. Instead of evaluating only the accuracy of a model, leaders need to assess the trustworthiness of the entire system. Explainability is the key to this trustworthiness.

- XAI as a differentiating feature: Companies that provide their AI-supported products and services with transparent justifications clearly stand out from the competition. They offer their customers not only a solution, but also the certainty and control that are crucial for long-term partnerships.

- Collaboration as a success factor: Implementing XAI requires close collaboration between software architects, data scientists, and subject matter experts. Architects must provide the infrastructure for explainability, while data scientists select the appropriate methods and subject matter experts validate the plausibility of explanations.

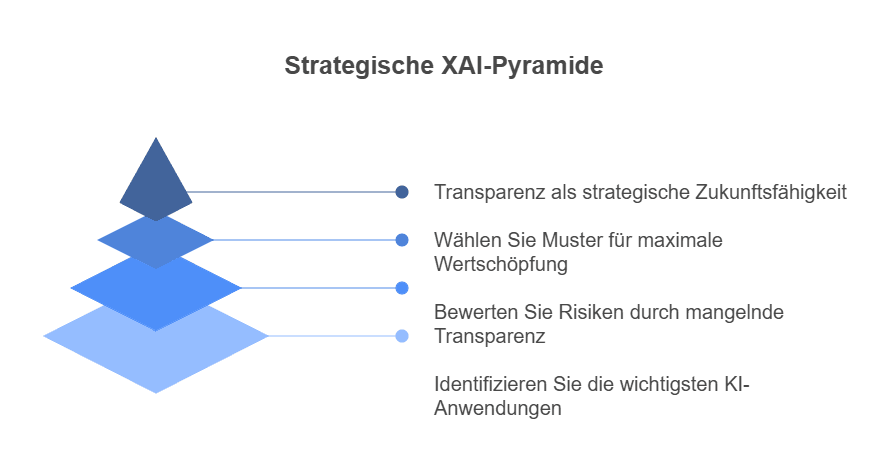

Recommendation for action & outlook

Don't start by asking which XAI method is best. Start by identifying your most critical AI applications.

Assess where a lack of transparency poses the greatest business risk. Is it compliance with regulatory requirements, user acceptance or the risk of costly wrong decisions? Your answer to this question will determine which architectural patterns and explanation methods create the most value for your organization. The conscious design of transparent systems is not a technical detail - it is a strategic decision for the future viability of your company.

📖 Also read: AI software architecture: manage risks & secure strategic advantages
