Overnight Supply Chain Risk: What the Anthropic Ban Means for Your Business

Three scenarios. All real since February 27, 2026:

Scenario 1: The bank. You are a German private bank. You use Anthropic's Claude for your credit risk analysis and your customer service bot. DORA requires you to have a documented exit strategy for each critical ICT service provider. You don't have one, because Claude has been running reliably so far. And even if you had one: if your entire AI infrastructure is built on a single provider, you now face an unplanned migration project. Evaluating new models, rewriting prompts, adapting interfaces, retraining hundreds of employees: that is six months in emergency mode. An exit strategy on paper does not protect you from the operational reality of vendor lock-in.

The US government is now classifying Anthropic as a “supply chain risk to national security”. Your US correspondent banking partner asks: “Do you use Anthropic technology?” The answer decides the business relationship.

Scenario 2: The medium-sized company. You produce turbine parts. Or packaging material. Or specialty screws. Your largest customer supplies a US defense company. You yourself have nothing to do with the Pentagon, but you use Claude internally for process automation. As of February 27, no company that works with the US military is allowed to have business relationships with Anthropic users. Your customer calls: “Are you using Anthropic? Then we have a problem.”

Scenario 3: The insurer. You are an insurance company regulated under DORA. You need to check your entire supply chain for IT security risks. Suddenly one of your software suppliers is a supply chain risk: not because it was hacked, but because it uses AI models from a now-blacklisted provider.

All three scenarios have become reality since yesterday.

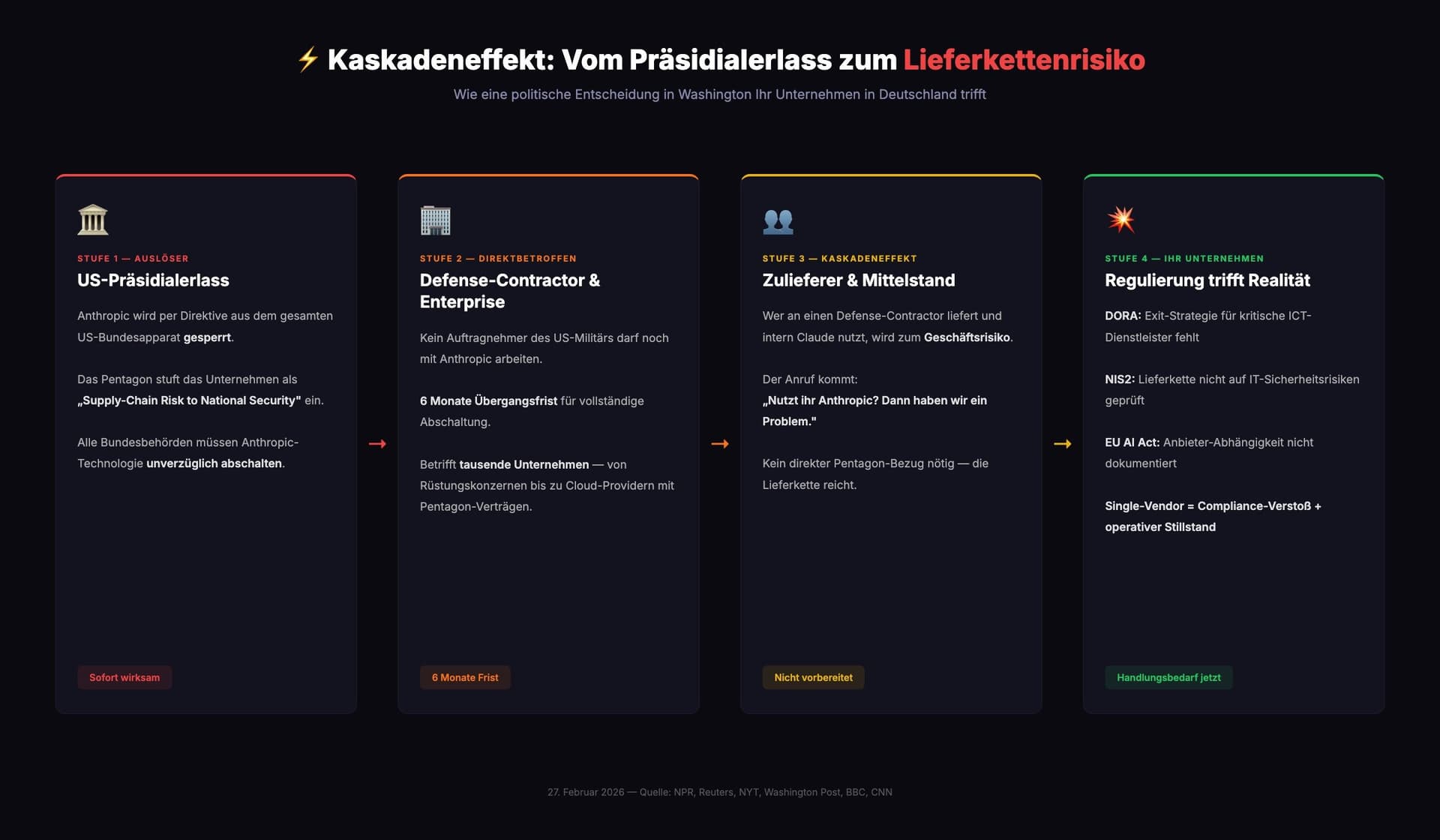

The US government has banned Anthropic, developer of Claude, one of the most widely used AI models in the world, from the entire federal apparatus by executive order. The Pentagon classified the company as a “supply chain risk to national security.” No contractor, supplier or partner of the US military may work with Anthropic any longer. That same evening, competitor OpenAI signed a Pentagon contract.

And that is the crucial point: the cascade doesn't stop at the Pentagon. It runs through the entire supply chain, from the defense contractor to the Tier 2 supplier to the German mid-sized company that simply makes a good product and uses AI internally. Anyone using Anthropic technology now poses a risk to every business partner with direct or indirect dealings with the US defense sector.

This affects more companies than you think. And next time it might not be Anthropic — but OpenAI, Google or a European provider.

What exactly happened

The escalation began with a demand from the US Department of Defense: unrestricted access to Anthropic's AI models for all “lawful purposes” in military use. Anthropic CEO Dario Amodei refused, citing the company's own responsible use policy.

The answer came quickly and harshly:

- President Trump issued a directive ordering all U.S. federal agencies to “immediately cease” the use of Anthropic technology.

- Secretary of Defense Pete Hegseth declared Anthropic a “Supply-Chain Risk to National Security”, a classification normally reserved for companies from hostile states.

- All U.S. military contractors and suppliers are immediately excluded from business relationships with Anthropic.

- Transition period: six months, then complete shutdown.

NPR, Reuters, the New York Times, the Washington Post, the BBC and CNN all report consistently. Anthropic has announced that it will challenge the classification in court.

Why this is not a US problem

It is tempting to dismiss this development as American domestic politics. That would be a mistake.

European companies use the same providers. Anthropic (Claude), OpenAI (GPT), Google (Gemini) and Meta (LLaMA) dominate the market. Anyone who has built their business processes on one of these models bears exactly the same concentration risk.

- Regulation: The EU AI Act is being phased in gradually. What happens if your model provider does not meet its requirements?

- Geopolitics: Export controls, sanctions, data localization requirements.

- Business decisions: Price increases, API changes, model discontinuation. OpenAI has changed its pricing structure three times in 2025 alone.

- Compliance conflicts: NIS2 requires a documented supply chain analysis. DORA calls for exit strategies. If you only have one AI provider, you have a concentration risk.

This is particularly explosive for banks, insurance companies and companies in the defense supply chain. A provider outage is not an IT problem here — it is a compliance violation.

And mid-sized companies (the German Mittelstand) are hit the hardest. Not because the risk is greater, but because they lack the resources for a quick migration. A major bank has a team that can rebuild its AI stack in weeks. A mid-sized company with 80 employees does not. It faces a choice: weeks of development work or losing the customer.

The pattern is reinforced by regulation: NIS2 requires affected companies to check their entire supply chain for IT security risks. DORA demands the same for the financial sector. If your client falls under these regulations, the requirements are passed on to you as a supplier. You don't have to be regulated yourself to be affected. It is enough if your customer is.
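Answering a customer's “Do you use Anthropic technology?” means knowing your indirect dependencies too, not only the contracts you signed yourself. A minimal sketch, assuming you keep a simple inventory of which use cases and supplier products depend on which AI providers (the node names and the `DEPENDENCIES` mapping are hypothetical), is a breadth-first search that lists every path from your company to a given provider:

```python
from collections import deque

# Hypothetical inventory: each node lists what it depends on directly.
# Nodes are your own use cases, supplier products, or AI providers.
DEPENDENCIES: dict[str, list[str]] = {
    "our-company": ["process-automation", "ticketing-saas"],
    "process-automation": ["provider:openai"],
    "ticketing-saas": ["provider:anthropic"],  # a supplier product built on Claude
}


def paths_to(target: str, root: str, deps: dict[str, list[str]]) -> list[list[str]]:
    """Return every dependency path from root to target, shortest first."""
    found: list[list[str]] = []
    queue = deque([[root]])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            found.append(path)
            continue
        for child in deps.get(node, []):
            if child not in path:  # ignore cycles in the inventory
                queue.append(path + [child])
    return found


if __name__ == "__main__":
    for path in paths_to("provider:anthropic", "our-company", DEPENDENCIES):
        print(" -> ".join(path))
    # prints: our-company -> ticketing-saas -> provider:anthropic
```

Each printed path is one piece of evidence for a supplier questionnaire. An empty result only means the inventory shows no exposure, so the answer is only as reliable as the inventory itself.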

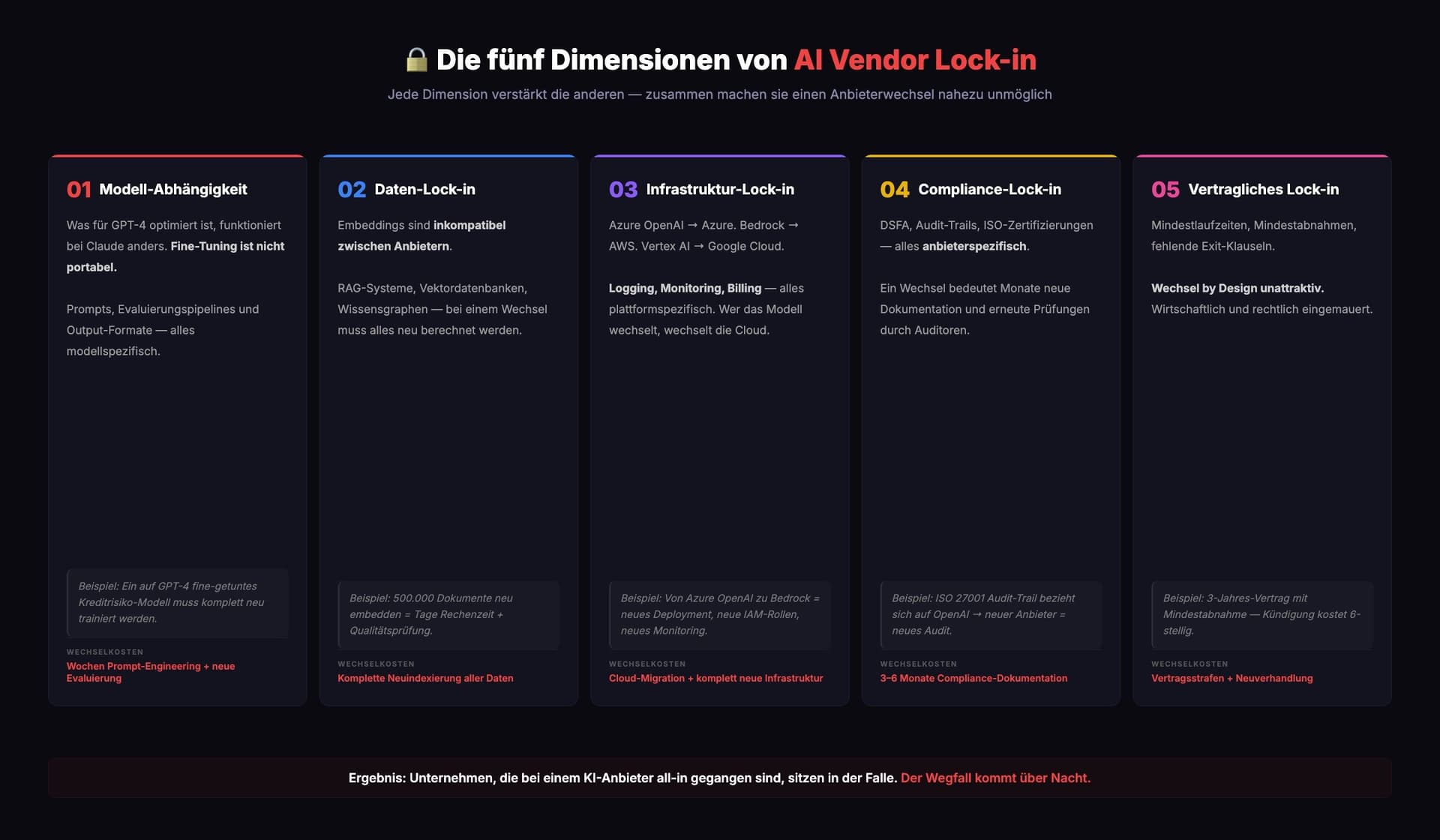

The five dimensions of AI vendor lock-in

AI vendor lock-in is more complex than classic cloud lock-in. Five dependencies reinforce each other:

1. Model dependency

Prompts optimized for GPT-4 work differently on Claude. Fine-tuning is not portable.
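One way to contain model dependency is to treat prompts as per-model assets rather than hard-coded strings. The sketch below is an illustration under assumptions: the `UseCase` class, the model-family keys `"gpt"` and `"claude"` and the example prompts are all hypothetical. It stores one tested prompt variant per model family and flags use cases that only have a variant for a single model:

```python
from dataclasses import dataclass, field


@dataclass
class UseCase:
    """A business use case with one tested prompt variant per model family."""
    name: str
    # Keyed by model family (hypothetical labels such as "gpt" or "claude").
    prompts: dict[str, str] = field(default_factory=dict)

    def render(self, model_family: str, **values: str) -> str:
        """Fill the variant for one model family; KeyError if none exists."""
        return self.prompts[model_family].format(**values)


def single_model_use_cases(use_cases: list[UseCase], minimum: int = 2) -> list[str]:
    """Names of use cases with fewer than `minimum` prompt variants."""
    return [uc.name for uc in use_cases if len(uc.prompts) < minimum]


if __name__ == "__main__":
    summarize = UseCase(
        "summarize-ticket",
        {
            "gpt": "Summarize the ticket below in three bullet points.\n{ticket}",
            "claude": "<ticket>{ticket}</ticket>\nSummarize the ticket in three bullet points.",
        },
    )
    classify = UseCase("classify-invoice", {"gpt": "Classify this invoice: {invoice}"})
    print(single_model_use_cases([summarize, classify]))  # ['classify-invoice']
    print(summarize.render("claude", ticket="Printer offline"))
```

Here `classify-invoice` is the use case that would be stranded if its only model disappeared, which is exactly what testing every use case on at least two models is meant to catch.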

2. Data lock-in

Embeddings are incompatible between providers. If you switch, every stored embedding has to be recomputed.
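Recomputation is only manageable if you know which vectors came from which model and still have the source text. A minimal sketch, assuming a hypothetical `StoredEmbedding` record and made-up model identifiers, tags every vector with its model, refuses to compare vectors across models and produces the re-embedding worklist for a provider switch:

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class StoredEmbedding:
    doc_id: str
    source_text: str            # kept so the vector can be recomputed later
    model_id: str               # hypothetical identifier, e.g. "provider-a/embed-v1"
    vector: tuple[float, ...]


def cosine(a: StoredEmbedding, b: StoredEmbedding) -> float:
    """Cosine similarity; only meaningful for vectors from the same model."""
    if a.model_id != b.model_id or len(a.vector) != len(b.vector):
        raise ValueError(f"incompatible embeddings: {a.model_id} vs {b.model_id}")
    dot = sum(x * y for x, y in zip(a.vector, b.vector))
    norm = math.sqrt(sum(x * x for x in a.vector)) * math.sqrt(sum(y * y for y in b.vector))
    if norm == 0:
        raise ValueError("zero vector has no direction")
    return dot / norm


def needs_reembedding(store: list[StoredEmbedding], target_model: str) -> list[StoredEmbedding]:
    """Records not yet embedded with target_model: the worklist for a switch."""
    return [e for e in store if e.model_id != target_model]


if __name__ == "__main__":
    store = [
        StoredEmbedding("doc-1", "Invoice policy", "provider-a/embed-v1", (1.0, 0.0)),
        StoredEmbedding("doc-2", "Travel policy", "provider-a/embed-v1", (0.0, 1.0)),
    ]
    print(cosine(store[0], store[1]))  # 0.0
    worklist = needs_reembedding(store, "provider-b/embed-v2")
    print([e.doc_id for e in worklist])  # ['doc-1', 'doc-2']
```

Keeping `source_text` next to each vector is what turns a provider switch from a data-loss event into a batch job.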

3. Infrastructure lock-in

Azure OpenAI binds to Azure. Bedrock to AWS. Vertex AI to Google Cloud. Logging, monitoring, billing — all platform-specific.
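Infrastructure lock-in is usually countered with a thin abstraction layer: business code talks to one interface, and each provider SDK sits behind its own adapter. The sketch below uses hypothetical names throughout (`ChatModel`, `EchoAdapter`, `Gateway`); `EchoAdapter` is a stand-in where a real adapter would call Azure OpenAI, Bedrock or Vertex AI. Logging happens in the gateway, so it does not depend on one cloud's tooling:

```python
import logging
from typing import Dict, Optional, Protocol

# Central logger for all model calls; configure handlers in your application.
log = logging.getLogger("ai-gateway")


class ChatModel(Protocol):
    """The only interface business code is allowed to depend on."""
    name: str

    def complete(self, prompt: str) -> str: ...


class EchoAdapter:
    """Stand-in adapter for testing. A real adapter would wrap one provider's
    SDK inside complete() and translate its errors and response format."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class Gateway:
    """Single entry point: provider selection and logging live here."""

    def __init__(self, models: Dict[str, ChatModel], default: str) -> None:
        self.models = models
        self.default = default

    def complete(self, prompt: str, model: Optional[str] = None) -> str:
        chosen = self.models[model or self.default]
        log.info("model=%s prompt_chars=%d", chosen.name, len(prompt))
        return chosen.complete(prompt)


if __name__ == "__main__":
    gateway = Gateway(
        {"primary": EchoAdapter("primary"), "backup": EchoAdapter("backup")},
        default="primary",
    )
    print(gateway.complete("Hello"))  # [primary] Hello
    gateway.default = "backup"        # provider switch = configuration change
    print(gateway.complete("Hello"))  # [backup] Hello
```

With this design, switching the default provider is a configuration change rather than a rewrite of every caller.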

4. Compliance lock-in

Certifications, audit trails and DPIAs refer to a specific provider. Change of provider = months of new documentation.

5. Contractual lock-in

Minimum terms, minimum purchase commitments, missing exit clauses. Switching is made economically unattractive by design.

The result: Companies that have gone all-in with a single AI provider are trapped. And the loss can come overnight.

Multi-model is not a nice-to-have — it is mandatory

The lesson from February 27, 2026 is clear: companies need an AI infrastructure that works regardless of the manufacturer. Not as a theoretical option, but as an operational reality.

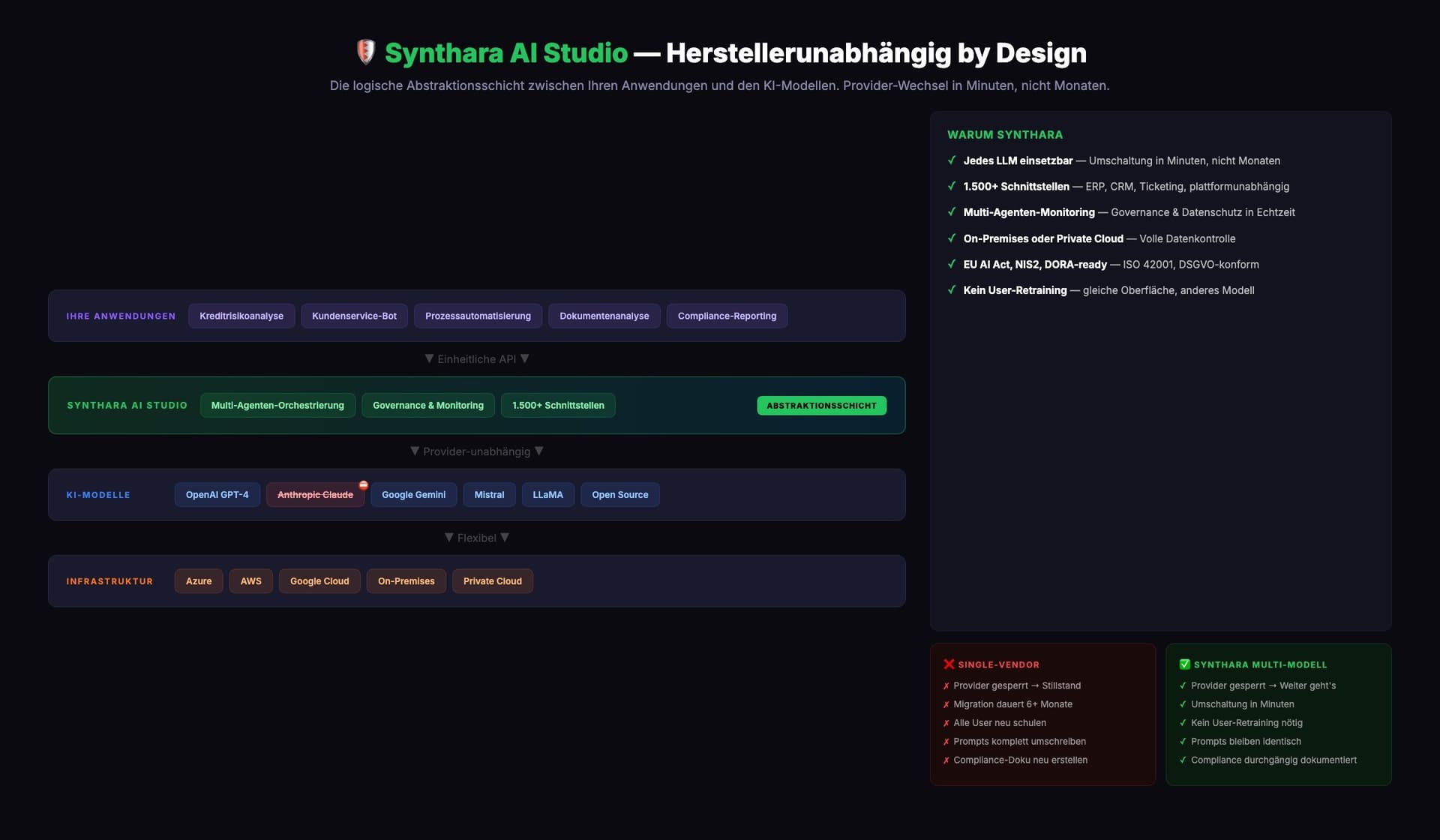

Synthara AI Studio: Vendor-Unlocked by Design

Synthara is a manufacturer-independent multi-agent platform, built for companies that cannot afford lock-in:

- Any LLM can be used: OpenAI, Anthropic, Google, Mistral, LLaMA, open-source models. Switchover in minutes.

- 1,500+ interfaces: Integration into ERP, CRM, ticketing, platform-independent.

- Multi-agent monitoring: Governance, data protection, compliance in real time.

- On-premise or private cloud: Full data control. No data leakage.

- EU AI Act, NIS2, DORA ready: ISO/IEC 42001, GDPR compliant, built-in compliance reporting.

This is the difference between an AI solution and an AI strategy.

Checklist: How to protect your company from AI vendor lock-in

Every company that uses AI productively should implement these seven points:

- 1. Add an abstraction layer: Never call provider APIs directly.

- 2. Establish multi-model testing: Test each use case on at least two models.

- 3. Negotiate exit clauses: Including data portability and transition periods.

- 4. Keep embeddings portable: Maintain open formats or recomputation capacity.

- 5. Maintain provider-independent compliance documentation: Tie it to processes, not to providers.

- 6. Formally assess supply chain risk: NIS2 and DORA require this anyway.

- 7. Run regular failover drills: Simulate the failure of the primary AI provider.
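Point 7 can be automated. The sketch below is hypothetical (the provider names, the `route` and `failover_drill` functions and the lambda backends are illustrative only): each use case has an ordered list of providers, the drill disables the primary one, and the report shows which provider took over, or `None` where there is no working plan B:

```python
from typing import Callable, Dict, List, Optional, Set, Tuple

Backend = Callable[[str], str]
Plan = List[Tuple[str, Backend]]  # ordered: primary provider first


class ProviderUnavailable(Exception):
    """Raised when a provider cannot serve a request."""


def route(prompt: str, plan: Plan, disabled: Set[str]) -> Tuple[str, str]:
    """Try providers in order, skipping disabled ones; return (provider, answer)."""
    errors: List[str] = []
    for name, call in plan:
        if name in disabled:
            errors.append(f"{name}: disabled")
            continue
        try:
            return name, call(prompt)
        except ProviderUnavailable as exc:
            errors.append(f"{name}: {exc}")
    raise ProviderUnavailable("; ".join(errors) or "no providers configured")


def failover_drill(plans: Dict[str, Plan], probe: str = "ping") -> Dict[str, Optional[str]]:
    """Disable each use case's primary provider and report who took over.
    None means the use case has no working plan B."""
    report: Dict[str, Optional[str]] = {}
    for use_case, plan in plans.items():
        primary = {plan[0][0]} if plan else set()
        try:
            provider, _ = route(probe, plan, disabled=primary)
            report[use_case] = provider
        except ProviderUnavailable:
            report[use_case] = None
    return report


if __name__ == "__main__":
    plans: Dict[str, Plan] = {
        "credit-risk-summary": [
            ("provider-a", lambda p: "A: " + p),
            ("provider-b", lambda p: "B: " + p),
        ],
        "customer-chatbot": [("provider-a", lambda p: "A: " + p)],
    }
    print(failover_drill(plans))
    # {'credit-risk-summary': 'provider-b', 'customer-chatbot': None}
```

In a real drill, the probe would be a representative request per use case and the answers would also be checked for quality, not just for availability.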

Conclusion: AI sovereignty is a matter for top management

Anthropic is an excellent company. What is up for debate is whether it is wise to base your AI strategy on a single provider, no matter how good it is.

Synthara AI Studio is the only multi-agent platform in the DACH region that combines complete vendor independence with enterprise-grade compliance. No vendor lock-in. No single point of failure. Complete sovereignty.

Anyone who protects themselves today will have a competitive advantage tomorrow. Anyone who waits will have a problem tomorrow, and no plan B.

ADVISORI supports companies with AI transformation, information security and regulatory compliance. Contact us for a non-binding consultation.
