Security concept for autonomous AI agents: Use specialized security agents as monitoring instances

AI agents are the next level of generative AI and are increasingly being used in companies. But who ensures that they adhere to compliance and security guidelines? The solution: specialized upstream security agents.

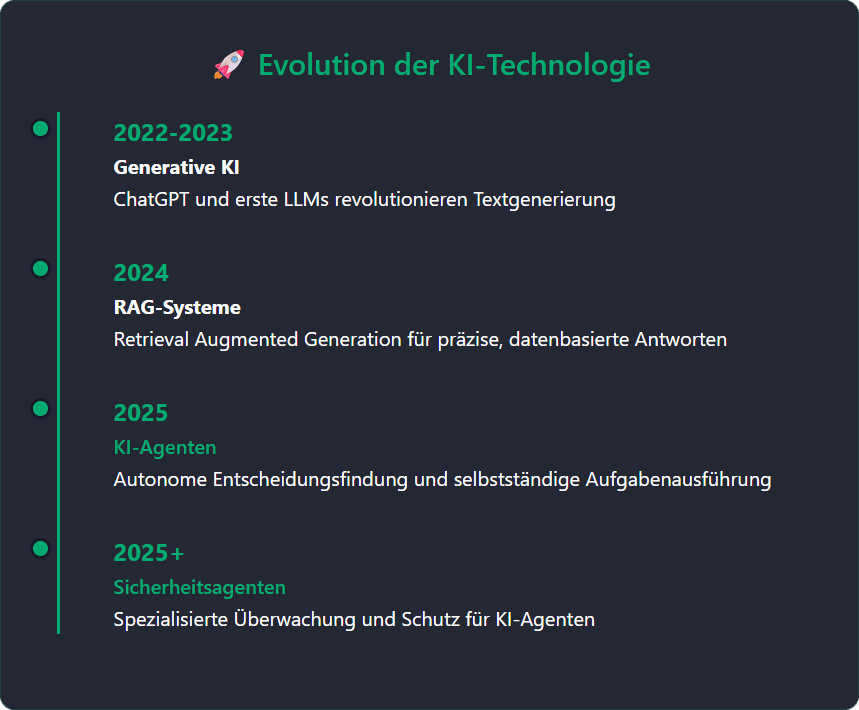

The year 2025 marks the transition from generative AI and RAGs (Retrieval Augmented Generation) to AI agents.

RAGs generate precise answers by accessing specific data sources. AI agents are also able to make decisions independently and carry out tasks on behalf of a user or a system. There are already countless AI agents who independently book flights and hotels, make calendar entries, plan appointments, advise customers on the hotline or check invoices, to name just a few examples.

Who ensures the safety of AI agents?

AI agents offer enormous innovation potential. But they also raise the central question: who monitors their security? How to ensure that only authorized people have access to AI agents? Who guarantees that they do not violate security guidelines and compliance requirements?

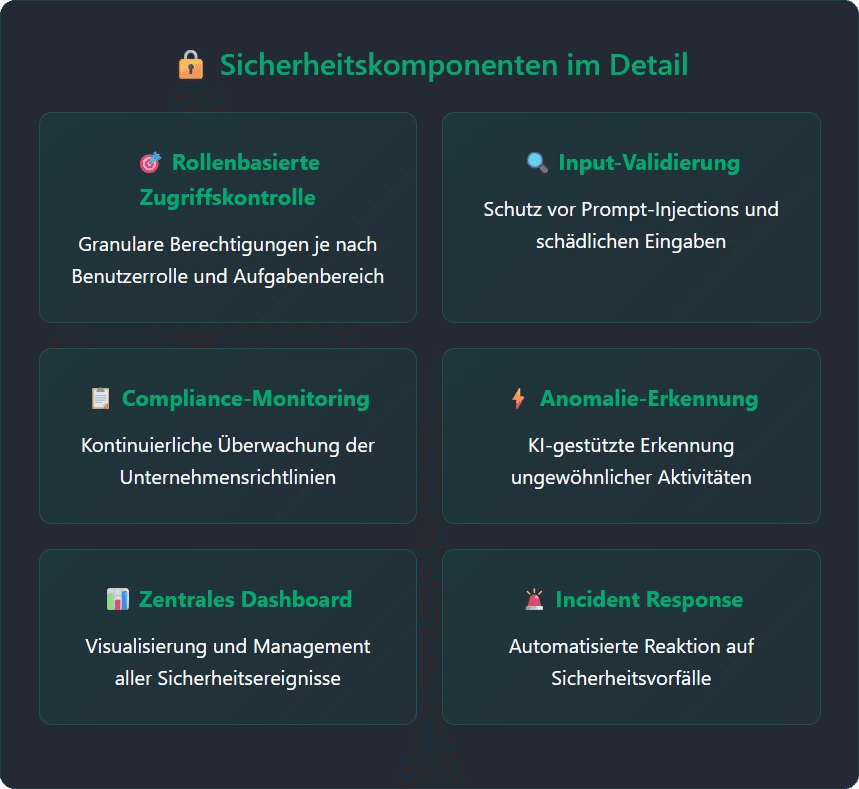

The solution: specialized security agents, which act as monitoring instances within the agent architecture. They also protect against threats such as prompt injections, data leaks and other attacks. A central dashboard supports the IT team in monitoring security agents by visualizing security-critical events, highlighting anomalies and enabling targeted interventions - including concrete recommendations for action.

How do security agents work?

The architecture relies on a combination of static and dynamic scanners. Not a single security agent performs all security tasks of all AI agents. That would be inefficient and slow. Rather, a network of specialized, tailor-made security agents specifically checks various aspects, which is also individually adapted to each agent. Instead of using a large, resource-intensive language model (e.g. DeepSeek or OpenAI o1), lightweight, optimized models are the solution. Complex reasoning processes are no longer required, which can lead to delays in the workflow. For example, the following security aspects would be relevant for an AI agent that books flights independently:

- Role-based access control

- Input validation to protect against prompt injections

- Company-specific booking policies

This security framework developed by Advisori FTC enables companies to efficiently secure themselves when using AI agents. At the same time, it supports IT teams in continuously monitoring agent performance.

This means companies can invest in this future technology with confidence.

Contact

ADVISORI FTC GmbHinfo@advisori.deTel. +49 69 91311301https://www.advisori.de

Related articles

Continue exploring with related insights from our experts.

Generative AI in the enterprise: From pilot to production-scale rollout

How to take generative AI from pilot to production-scale rollout: the three deployment patterns, five proven use-case archetypes, the compliance layer aligned with EU AI Act and OWASP LLM Top 10 (2025), realistic cost math, and the operating model most pilots never build.

Building an AI roadmap: The 4-phase method for enterprise AI transformation

Build an AI roadmap in four phases: potential assessment, use-case selection, pilot, scale. With a 12-18 month timeline, scoring matrix, pitfall taxonomy, EU AI Act and ISO 42001 embedding, and an executive FAQ.

What are the 4 Types of AI? The Complete Guide

The 4 types of AI per Arend Hintze (2016): Reactive Machines, Limited Memory, Theory of Mind, and Self-Aware AI. With examples, EU AI Act mapping and what this means for today's enterprise AI use.