EU AI Act in the Financial Sector: Anchoring AI in the Existing ICS – Instead of Building a Parallel World

Why the AI Act is primarily an AI-specific extension of the internal control system for banks – and not a complete governance restart.

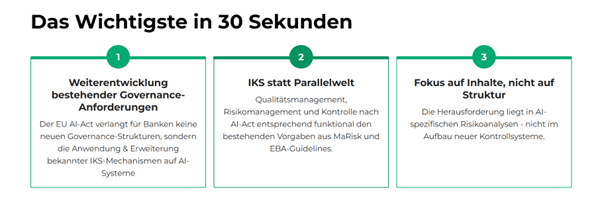

Key Takeaways:

- The EU AI Act does not require banks to create new basic corporate governance structures. It requires them to apply and extend the existing internal control system (ICS) to AI systems, especially high-risk AI.

- Quality management, risk management, and control functions under the AI Act can be integrated into the established ICS architecture of banks (MaRisk, EBA Guidelines), instead of building a parallel control world.

- The main adaptation effort lies in supplementing existing governance, risk, and control processes with AI-specific requirements, not in building a completely new control system.

The Supposed Upheaval: Why the AI Act Surprises Banks Less Than Expected

The EU AI Act creates the first unified European legal framework for the use of AI. In many banks, this step is initially perceived as a profound change, especially where AI has so far been treated as an innovation topic or IT project. The regulatory scope of the AI Act extends far beyond control and governance issues; this article deliberately focuses on the connection points to the internal control system (ICS) of banks.

On closer examination, however, it becomes clear that the AI Act does not introduce a completely new governance cosmos for banks. It specifies known supervisory principles and applies them explicitly to AI systems, especially high-risk AI systems. Its requirements can be structurally embedded in the existing ICS under MaRisk and the EBA Guidelines.

This shifts the perspective: The central question is less "Do we need new structures?" than "How do we cleanly anchor AI applications in the existing ICS, from the First Line to Internal Audit?".

Governance, Provider Role, and ICS: Who Bears the Responsibility?

The AI Act obliges providers of high-risk AI systems to establish a quality management system that ensures compliance with the regulation (Art. 17 Para. 1). From a banking perspective, this quality management system should not be understood as a parallel world to the existing ICS, but as an AI-specific design and supplement to the existing governance and control framework.

The first question is when a bank actually counts as a provider of a high-risk AI system. A bank is considered a provider if it develops a system for creditworthiness assessment itself (or has it developed by third parties) and deploys it under its own responsibility in the institution, regardless of whether the system is marketed externally or only used internally. If the bank makes significant changes to an existing high-risk AI system that go beyond the original conformity assessment, it may also be considered a provider of the modified system and must then meet the relevant requirements of the AI Act.

Particularly relevant here are the classification as a high-risk use case, the provider definition, the rules on role shifting for significant changes, the conformity assessment requirements, and the related recitals (especially Annex III No. 5 letter b, Art. 3 No. 3, Art. 8-16, Art. 25, Art. 43, Recitals 84 and 128 AI Act).

This directly links the governance question with the ICS: Where AI systems are professionally managed, approved, and modified, the ICS must structurally and procedurally reflect this responsibility.
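The provider question described in this section can be captured as a simple screening rule in an AI inventory. The following is a minimal sketch, assuming a hypothetical `AISystemFacts` record whose field names are purely illustrative; it simplifies the criteria named above and is meant as a first triage step that flags systems for legal review, not as a legal test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystemFacts:
    """Hypothetical inventory record for one AI system (field names are assumptions)."""
    high_risk_use_case: bool               # e.g. creditworthiness assessment (Annex III No. 5 letter b)
    developed_by_or_for_bank: bool         # built in-house or commissioned from third parties
    deployed_under_own_responsibility: bool
    significantly_modified: bool           # change beyond the original conformity assessment


def may_be_provider(facts: AISystemFacts) -> bool:
    """Return True if the bank may have to meet provider obligations for this system."""
    if not facts.high_risk_use_case:
        return False
    # Case 1: own (or commissioned) development, deployed under the bank's own responsibility.
    own_development = (
        facts.developed_by_or_for_bank and facts.deployed_under_own_responsibility
    )
    # Case 2: significant modification of an existing high-risk system (role shift).
    return own_development or facts.significantly_modified


# Example: an in-house scoring model that is used only internally.
internal_scoring = AISystemFacts(
    high_risk_use_case=True,
    developed_by_or_for_bank=True,
    deployed_under_own_responsibility=True,
    significantly_modified=False,
)
print(may_be_provider(internal_scoring))
```

Whether the system is marketed externally is deliberately not an input, because the text above treats purely internal use the same way.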

Quality Management System vs. ICS: Supplement Instead of Dual Structure

The quality management system required under Art. 17 AI Act includes, among other things, a compliance concept, development and testing processes, data management, risk management, post-market monitoring, and incident reporting. For banks with a mature ICS, the question is therefore not whether they need to build "a second system," but how they integrate the AI Act elements into their existing structures.

Art. 17 Para. 4 AI Act is the decisive connection point here. For financial institutions that are already subject to requirements for their internal governance and control arrangements under European financial market regulation, the obligation to establish a quality management system is considered fulfilled if the existing governance system complies with the relevant financial market regulations. The exceptions are the requirements for the risk management system (Art. 9), post-market monitoring (Art. 72), and the reporting of serious incidents (Art. 73), which are not covered by this presumption.

This allows the quality management system to be distinguished from the ICS as follows:

- The ICS forms the overarching framework of internal governance, responsibilities, processes, and controls (MaRisk AT 3, AT 4.3, AT 4.4; EBA Guidelines on Internal Governance).

- The AI Act quality management system specifies AI-specific rules and procedures within this framework across the entire lifecycle of high-risk AI systems (design, development, operation, monitoring, changes).

For banks, this means: The existing ICS remains the structural carrier; AI Act obligations are integrated into the ICS as AI-specific manifestations (policies, processes, controls, roles).

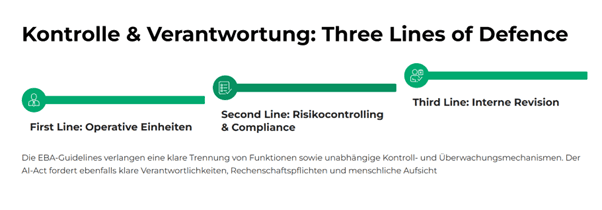
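For a gap analysis, the effect of Art. 17 Para. 4 can be expressed as a split of the quality management elements into those deemed fulfilled by the existing governance system and those that remain explicitly required. The sketch below is illustrative only: the element names follow the list in this section, while `split_qms_elements` and the `governance_compliant` flag are assumptions introduced for the example.

```python
# Elements excepted by Art. 17 Para. 4: not deemed fulfilled by existing governance.
CARVED_OUT = {
    "risk management system": "Art. 9",
    "post-market monitoring": "Art. 72",
    "serious incident reporting": "Art. 73",
}


def split_qms_elements(
    elements: list[str], governance_compliant: bool
) -> dict[str, list[str]]:
    """Partition QMS elements for a financial institution's gap analysis."""
    result: dict[str, list[str]] = {"deemed_fulfilled": [], "explicitly_required": []}
    for element in elements:
        # The presumption applies only if the existing governance system complies
        # with financial market regulation, and never to the carved-out elements.
        if governance_compliant and element not in CARVED_OUT:
            result["deemed_fulfilled"].append(element)
        else:
            result["explicitly_required"].append(element)
    return result


qms_elements = [
    "compliance concept",
    "development and testing processes",
    "data management",
    "risk management system",
    "post-market monitoring",
    "serious incident reporting",
]

split = split_qms_elements(qms_elements, governance_compliant=True)
for element in split["explicitly_required"]:
    print(f"{element}: see {CARVED_OUT[element]} AI Act")
```

If `governance_compliant` is `False`, every element lands in `explicitly_required`, which mirrors the condition that the presumption depends on compliance with the relevant financial market regulations.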

Three Lines of Defence: How the ICS Organizes AI Control

The EBA Guidelines on Internal Governance require a clear separation of functions and the establishment of independent control functions; in practice, this is regularly implemented through the Three Lines of Defence model. This is exactly where the AI Act connects:

- Art. 17 requires clear responsibilities and an accountability framework for management and employees in dealing with high-risk AI systems.

- Art. 14 requires effective human oversight with real intervention capabilities, not just "reading along."

- Art. 11-13 require documentation, transparency, and traceability that can be used for audit and independent control.

These requirements can be functionally embedded in the existing ICS and its Three Lines logic:

- First Line (Business / Department) holds business ownership of the AI use case and is responsible for ongoing risk management and compliance with requirements in day-to-day operations.

- Second Line (Risk Controlling, Compliance) sets AI policies, conducts independent risk analyses (including fundamental rights, bias, and transparency risks), and monitors implementation.

- Third Line (Internal Audit) reviews the design, implementation, and effectiveness of AI controls, including conformity with AI Act, MaRisk, and internal policies.

This creates not a parallel world, but an AI Act extension of the familiar ICS structure.

Risk Management and Documentation: Familiar Methods, New Content

Art. 9 AI Act obliges providers of high-risk AI systems to establish a continuous risk management system across the entire lifecycle of the system (identification, analysis, assessment, control, monitoring). Methodologically, this corresponds to the risk management approach of MaRisk (especially AT 4.3.2) and the EBA Guidelines; what is new is the substantive perspective:

- explicit focus on fundamental rights risks, discrimination, and biases,

- requirements for transparency, traceability, and human oversight,

- lifecycle-wide consideration with post-market monitoring and incident reporting.

In documentation and logging, too, the AI Act builds on familiar audit principles: MaRisk AT 6 already requires complete and traceable documentation of essential processes, systems, and decisions. The AI Act specifies this for AI systems by explicitly requiring technical documentation, model and data versioning, logging, and traceability of decisions for high-risk AI.

Thus, the AI Act does not structurally expand existing ICS obligations, but substantively adds AI-specific risk and documentation requirements.
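What traceability of decisions with model and data versioning can look like in practice is sketched below. This is a minimal illustration, not a prescribed log format: `DecisionLogEntry`, `log_decision`, and all field names are assumptions, and a real implementation would follow the institution's own logging and retention standards.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DecisionLogEntry:
    """Hypothetical audit record for one decision of a high-risk AI system."""
    system_id: str
    model_version: str           # which model produced the output
    data_version: str            # which training/reference data set the model is based on
    input_reference: str         # pointer to the stored input, not the input itself
    output: str
    reviewed_by: Optional[str]   # human overseer, if a review took place
    overridden: bool             # True if the human changed the AI outcome
    timestamp_utc: str


def log_decision(
    system_id: str,
    model_version: str,
    data_version: str,
    input_reference: str,
    output: str,
    reviewed_by: Optional[str] = None,
    overridden: bool = False,
) -> str:
    """Build one log entry and serialize it as a JSON line."""
    entry = DecisionLogEntry(
        system_id=system_id,
        model_version=model_version,
        data_version=data_version,
        input_reference=input_reference,
        output=output,
        reviewed_by=reviewed_by,
        overridden=overridden,
        timestamp_utc=datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(entry), sort_keys=True)


line = log_decision(
    system_id="credit-scoring",
    model_version="2.3.1",
    data_version="2025-Q4",
    input_reference="application/12345",
    output="refer to manual review",
    reviewed_by="analyst-042",
    overridden=False,
)
print(line)
```

Recording the reviewer and the override flag alongside the versions lets the Second and Third Line reconstruct which model and data stood behind a decision and whether human oversight actually intervened.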

Conclusion: ICS Evolution Instead of Regulatory Break

For banks, the EU AI Act is not a shock event, but a consistent specification and extension of existing supervisory requirements for the use of AI. The basic structures (governance, internal control system, Three Lines of Defence, risk management, documentation) are usually already in place.

The decisive success factor lies in consistently integrating AI systems into the existing ICS and understanding the AI Act obligations (especially for high-risk AI) as AI-specific manifestations of these structures. Those who think of the AI Act as an integral part of the ICS avoid dual structures, preserve the supervisory logic, and at the same time create transparency and clarity for all lines, from product development to risk management to Internal Audit.

Related articles

Continue exploring with related insights from our experts.

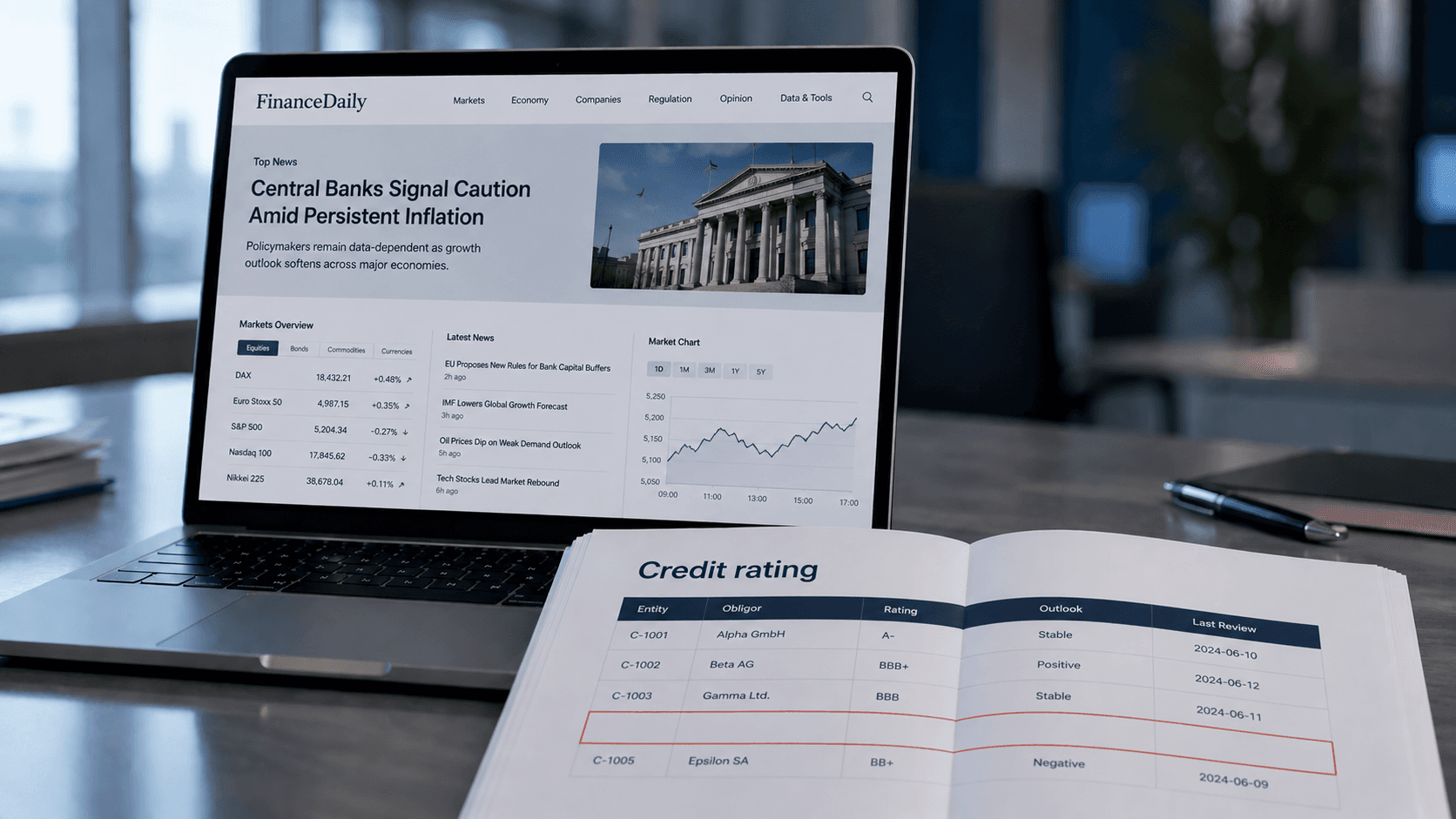

Credit Risk Modeling Trends 2026: Five Shifts Risk Managers Should Prepare For

The credit risk function of 2026 looks materially different from the one most banks still operate. Here are the five shifts, from generative AI to ESG integration, that risk managers should plan for now.

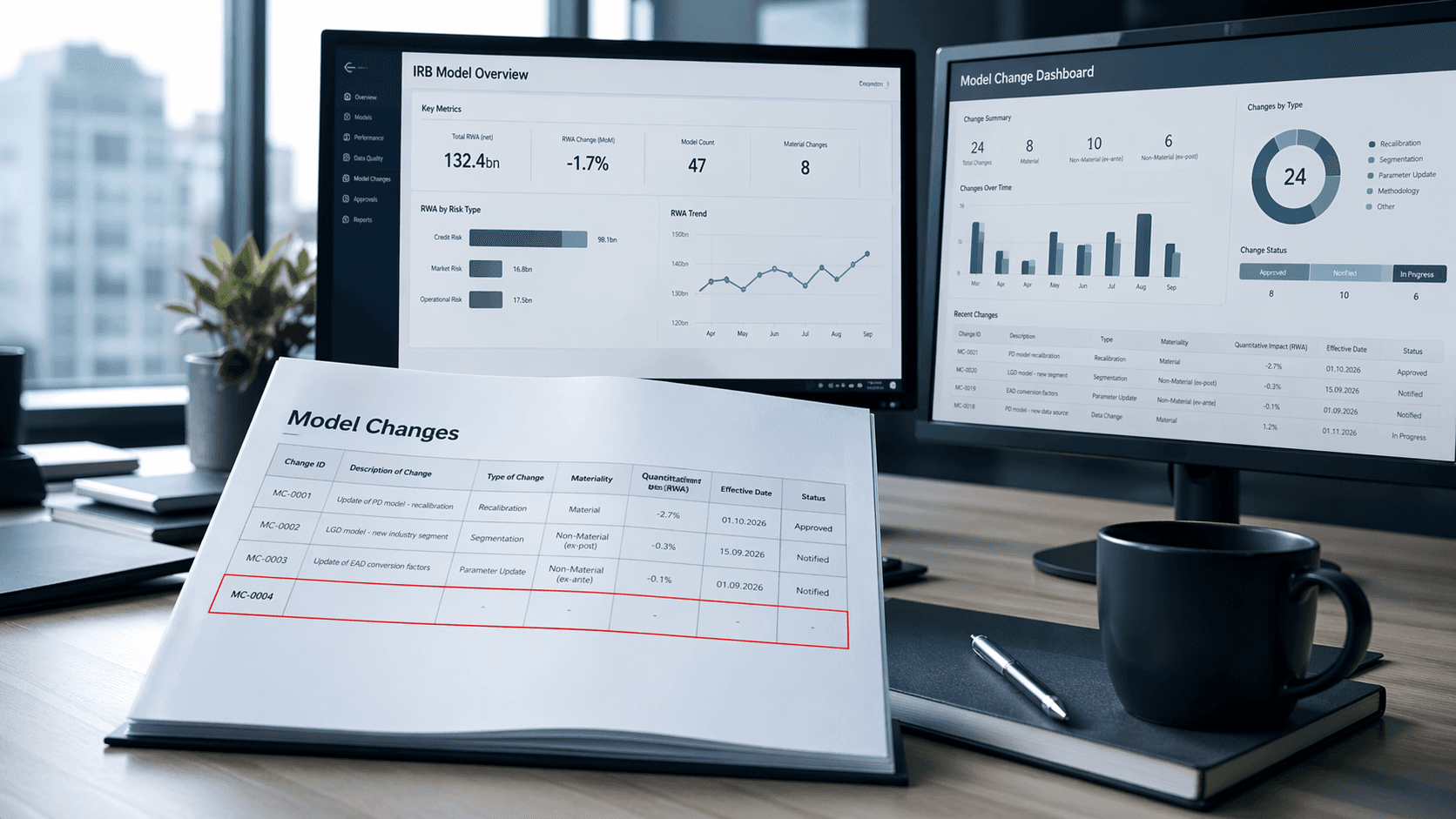

Less & Faster IRB Model Changes — What Actually Changed (and Why It Matters)

How the new IRB rules transform many previously time-consuming model changes into simple notifications—thereby drastically shortening approval times and significantly accelerating implementation

Cyber Insurance: Requirements, Costs, and Selection Guide for Businesses 2026

Cyber insurance covers financial losses from cyberattacks, data breaches, and IT outages. This guide explains what insurers require in 2026, coverage types, costs by company size, and how to choose the right policy — including how ISO 27001 certification reduces premiums.