Strategic AI governance in the financial sector: Implementation of the BSI test criteria catalog in practice

Executive summary

- New EU regulations such as the AI Act require comprehensive testing of so-called high-risk AI systems. For the first time, the BSI now offers a practical catalog of test criteria for this purpose.

- Financial companies need risk-based, systematic AI governance; individual measures alone are no longer enough.

- Anyone responsible for the use of AI in a financial company faces clear fields of action in four areas: transparency, robustness, governance and fairness.

- Critical risks such as data poisoning, model manipulation and fairness distortions should be identified and reduced systematically.

- The Federal Office for Information Security (BSI) provides a testing framework with its new catalog of criteria.

Introduction: Why the future of AI is no longer a field of experimentation

AI promises efficiency gains across a financial institution, from customer service to the back office. However, uncertainty about AI model risks repeatedly slows its adoption. Indeed, uncontrolled use of AI poses significant reputational, legal and operational risks, and the regulatory gray area for AI applications is narrowing.

With the EU AI Act, the requirements for testing processes, traceability and risk management are increasing massively. The BSI's new test criteria catalog now provides a framework that can help financial institutions ensure both regulatory compliance and robust operations.

1. AI risks in the financial sector: Making the invisible threat visible

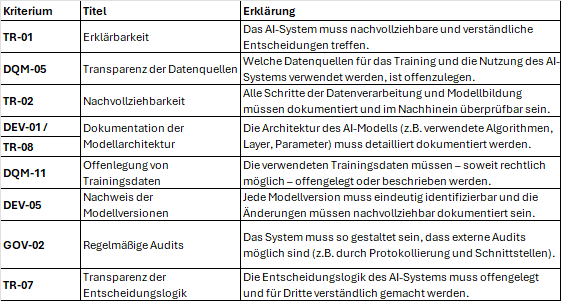

🔍 Transparency as a test of strategic controllability

It is often said that many AI systems operate as “black boxes”, and this is not entirely unjustified. In a regulated environment like the financial industry, however, this is only partially acceptable. Accordingly, the BSI catalog addresses, among other things:

- Explainability for decision-relevant models (especially TR-01)

- Labeling AI-generated content for users

- Documentation of model decisions including feedback processes
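To make labeling and decision documentation more concrete, here is a minimal Python sketch of a decision log entry. It assumes a hypothetical record layout: `DecisionRecord`, its fields and the label text are illustrative and are not prescribed by the BSI catalog. Each model output carries an explicit AI-generated flag, the model version and a short explanation, so that decisions can later be audited and linked to user feedback.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """Hypothetical audit-log entry for one decision-relevant model output."""
    case_id: str
    model_version: str
    output: str
    explanation: str           # e.g. main drivers reported by an explainability method
    ai_generated: bool = True  # controls the user-facing AI label
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def user_facing_text(self) -> str:
        """Return the output with a visible label if it was produced by AI."""
        prefix = "[AI-generated] " if self.ai_generated else ""
        return prefix + self.output

    def to_log_line(self) -> str:
        """Serialize the record as one JSON line for an append-only audit log."""
        return json.dumps(asdict(self), sort_keys=True)


record = DecisionRecord(
    case_id="C-1001",
    model_version="credit-score-2.3",
    output="Application referred to manual review.",
    explanation="debt_to_income above threshold",
)
print(record.user_facing_text())
print(record.to_log_line())
```

Keeping the label flag and the explanation in the same record means the text shown to users and the audit trail cannot drift apart.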

🤙 Feedback processes: best practices

- Structured feedback forms: Instead of free text, provide predefined answer formats for classifying problems.

- Systematic feedback handling: Feedback must be systematically stored, analyzed and translated into model adaptations.

- Human-in-the-loop reviews: People must check feedback at regular intervals.
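The three feedback practices can be sketched in a few lines of Python. This is an illustration under assumptions: the category names, the `Feedback` record and the 14-day review interval are hypothetical choices, not values from the BSI catalog. Predefined categories replace free text, aggregation shows recurring problem types, and a simple check lists feedback that no person has reviewed within the agreed interval.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum
from typing import List, Optional


class IssueCategory(Enum):
    """Predefined answer options replacing free text (illustrative categories)."""
    WRONG_RESULT = "wrong_result"
    UNCLEAR_EXPLANATION = "unclear_explanation"
    POSSIBLE_BIAS = "possible_bias"
    OTHER = "other"


@dataclass
class Feedback:
    case_id: str
    category: IssueCategory
    submitted: date
    reviewed_on: Optional[date] = None  # set once a person has checked the item


def overdue_for_human_review(items: List[Feedback], today: date,
                             max_age_days: int = 14) -> List[Feedback]:
    """Return unreviewed feedback older than the agreed review interval."""
    limit = timedelta(days=max_age_days)
    return [f for f in items
            if f.reviewed_on is None and today - f.submitted > limit]


def count_by_category(items: List[Feedback]) -> Counter:
    """Aggregate feedback so recurring problem types can drive model adaptation."""
    return Counter(f.category for f in items)


inbox = [
    Feedback("C-1", IssueCategory.POSSIBLE_BIAS, date(2024, 1, 2)),
    Feedback("C-2", IssueCategory.WRONG_RESULT, date(2024, 1, 20)),
    Feedback("C-3", IssueCategory.POSSIBLE_BIAS, date(2024, 1, 3),
             reviewed_on=date(2024, 1, 5)),
]
overdue = overdue_for_human_review(inbox, today=date(2024, 1, 25))
print([f.case_id for f in overdue])  # ['C-1']: 23 days old and never reviewed
print(count_by_category(inbox))
```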

Strategic benefit: Only transparency allows AI systems to be used in critical applications in a way that stakeholders accept.

2. Fairness: Discrimination is not just a reputational risk

⚖️ Fairness is not an ethical add-on, but rather a regulatory must

The catalog systematically requires companies to:

- Identify sensitive characteristics (e.g. gender, origin)

- Measure biases in outputs that can disadvantage customer groups

- Implement bias-mitigation measures
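Measuring output bias usually means comparing decision rates across the groups defined by a sensitive characteristic. The Python sketch below computes per-group approval rates and the ratio of the lowest to the highest rate (the disparate impact ratio). The metric choice and the 0.8 threshold are assumptions for illustration (0.8 echoes the "four-fifths" rule of thumb from US employment practice); the BSI catalog does not prescribe this specific metric or value, so each institution has to set and justify its own.

```python
from collections import defaultdict
from typing import Dict, Sequence


def selection_rates(groups: Sequence[str], approved: Sequence[bool]) -> Dict[str, float]:
    """Share of positive decisions per value of the sensitive characteristic."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for g, a in zip(groups, approved):
        totals[g] += 1
        positives[g] += int(a)
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates: Dict[str, float]) -> float:
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


groups = ["f", "f", "f", "f", "m", "m", "m", "m"]
approved = [True, False, False, True, True, True, True, False]
rates = selection_rates(groups, approved)
ratio = disparate_impact_ratio(rates)
THRESHOLD = 0.8  # illustrative only; must be defined and justified internally
print(rates, round(ratio, 3), "flag for review" if ratio < THRESHOLD else "ok")
```

With these toy numbers, 50% of group "f" and 75% of group "m" are approved, giving a ratio of about 0.667, which falls below the illustrative threshold and would trigger mitigation and documentation.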

Implication for corporate management: Anyone who does not systematically manage fairness and demonstrate it risks regulatory sanctions.

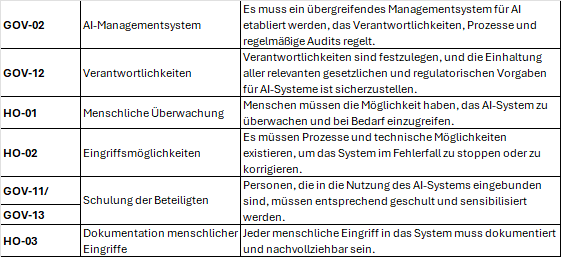

3. Governance & Responsibility: AI needs a new order

🧭 Responsibility must be clearly regulated throughout the life cycle of AI systems

The BSI catalog states, among other things:

- Every company must have a quality management system (QMS) for AI systems (GOV-02).

- Responsibilities along the AI life cycle must be documented (GOV-12).

- Human oversight processes are mandatory (HO-01 to HO-04).

This applies not only to in-house developments: third-party models used by the organization must also fall under AI governance.
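One way to keep documented responsibilities checkable is a simple register that maps each AI system and lifecycle phase to a named owner. The Python sketch below flags every phase without an owner, including for a purchased model. The phase names, system names and owners are hypothetical; the BSI catalog requires documented responsibilities (GOV-12) but does not define this data structure.

```python
from typing import Dict, List, Optional

# Illustrative lifecycle phases; adapt to the organization's own process model.
LIFECYCLE_PHASES = ["design", "data", "development", "validation",
                    "deployment", "operation", "decommissioning"]


def missing_owners(register: Dict[str, Dict[str, Optional[str]]]) -> List[str]:
    """Return 'system:phase' entries that have no documented responsible party."""
    gaps = []
    for system, owners in register.items():
        for phase in LIFECYCLE_PHASES:
            if not owners.get(phase):
                gaps.append(f"{system}:{phase}")
    return gaps


register = {
    # In-house model with an owner for every phase.
    "credit-scoring": {p: "Model Risk Office" for p in LIFECYCLE_PHASES},
    # Third-party model: the vendor builds it, but internal ownership is still required.
    "vendor-chatbot": {"design": "Customer Service", "deployment": "IT Ops",
                       "operation": "IT Ops"},
}
print(missing_owners(register))
```

The output lists the data, development, validation and decommissioning phases of the vendor chatbot, which is exactly where third-party models tend to fall outside governance.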

Strategic benefit: Organizations safeguard themselves from a regulatory perspective and protect their reputation when they view AI not only on a technical level but also in terms of its impact on the organization.

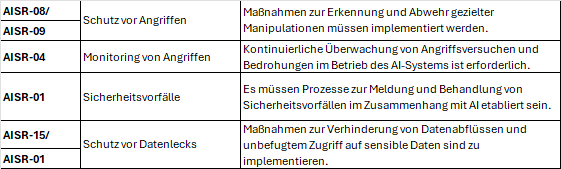

4. Security & Robustness: AI must not be a gateway for attackers

🔐 Technical security of AI applications is not just an IT issue, but also a governance issue

Adversarial attacks include:

- White-box attacks: The attackers know the model and craft targeted perturbations.

- Black-box attacks: The attackers have no knowledge of the model but use iterative queries to manipulate the output.

- Backdoor attacks: The model is manipulated so that it deliberately delivers false results for certain triggers.
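Black-box attacks rely only on querying a model and observing its outputs. A first, very simple robustness probe uses the same access pattern: apply small random changes to an input and count how often the decision flips. The Python sketch below is a random-perturbation test, not an adversarial attack or adversarial training; `toy_model`, the feature names and the ±2% perturbation size are hypothetical placeholders for a real model and a validated test design.

```python
import random
from typing import Callable, Dict

Features = Dict[str, float]


def decision_flip_rate(model: Callable[[Features], bool], x: Features,
                       epsilon: float = 0.02, trials: int = 200,
                       seed: int = 0) -> float:
    """Share of small random perturbations (up to +/- epsilon, relative) that
    change the model's decision. Only the model's output is used, as in a
    black-box setting."""
    rng = random.Random(seed)
    baseline = model(x)
    flips = 0
    for _ in range(trials):
        perturbed = {k: v * (1 + rng.uniform(-epsilon, epsilon)) for k, v in x.items()}
        if model(perturbed) != baseline:
            flips += 1
    return flips / trials


def toy_model(f: Features) -> bool:
    """Hypothetical scoring rule standing in for a real credit model."""
    return 0.6 * f["income"] / 1000 - 40 * f["debt_ratio"] > 10


print(decision_flip_rate(toy_model, {"income": 50000, "debt_ratio": 0.30}))  # clear case
print(decision_flip_rate(toy_model, {"income": 40000, "debt_ratio": 0.35}))  # borderline case
```

A clear-cut applicant should not flip at all, while cases near the decision boundary flip often; a high flip rate on ordinary inputs indicates that an attacker would need only little effort to manipulate the output.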

Recommended measures:

- Adversarial training

- Validate, clean, and filter input data

- Third-party penetration testing
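Input validation is the most code-level of these measures. The Python sketch below checks a scoring request against a whitelist schema before it reaches the model: unknown fields, non-numeric values, NaN and out-of-range values are rejected. The schema and its bounds are hypothetical examples for a credit-scoring request, not values from the BSI catalog.

```python
from typing import Dict, List, Tuple

# Hypothetical schema for a credit-scoring request: field -> (min, max)
SCHEMA: Dict[str, Tuple[float, float]] = {
    "age": (18, 100),
    "income": (0, 10_000_000),
    "debt_ratio": (0.0, 5.0),
}


def validate_request(payload: Dict[str, object]) -> List[str]:
    """Return a list of problems; an empty list means the input may be scored."""
    problems = []
    for name in payload:
        if name not in SCHEMA:
            problems.append(f"unexpected field: {name}")
    for name, (lo, hi) in SCHEMA.items():
        value = payload.get(name)
        # bool is a subclass of int in Python, so exclude it explicitly
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            problems.append(f"{name}: missing or not numeric")
        elif value != value or not lo <= value <= hi:  # value != value is True only for NaN
            problems.append(f"{name}: out of range [{lo}, {hi}]")
    return problems


print(validate_request({"age": 35, "income": 52000, "debt_ratio": 0.3}))
print(validate_request({"age": 12, "income": "lots", "debt_ratio": float("nan"), "note": "x"}))
```

Rejecting unknown fields, rather than silently ignoring them, keeps the model's input surface as small as the documented schema.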

The catalog also highlights:

- Protection against model theft and output manipulation (AISR-17, AISR-18)

- Residual risk management as a mandatory task (AISR-19)
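Residual risk management means stating explicitly which risk remains after controls and whether it is acceptable. The Python sketch below uses a common likelihood × impact scoring, reduced by an estimated control effectiveness, and lists risks whose residual score still exceeds the risk appetite. The 1 to 5 scales, the effectiveness estimates, the appetite value and the example risks are all assumptions for illustration; the BSI catalog requires residual risk management (AISR-19) but does not prescribe this formula.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AIRisk:
    name: str
    likelihood: int               # 1 (rare) .. 5 (almost certain)
    impact: int                   # 1 (minor) .. 5 (severe)
    control_effectiveness: float  # 0.0 (no effect) .. 1.0 (fully mitigating), estimated

    @property
    def inherent(self) -> int:
        return self.likelihood * self.impact

    @property
    def residual(self) -> float:
        return self.inherent * (1 - self.control_effectiveness)


def above_appetite(risks: List[AIRisk], appetite: float) -> List[str]:
    """Names of risks whose residual score still exceeds the risk appetite."""
    return [r.name for r in risks if r.residual > appetite]


risks = [
    AIRisk("model theft via API scraping", 3, 4, 0.5),       # 12 -> 6.0
    AIRisk("data poisoning of retraining set", 2, 5, 0.8),   # 10 -> about 2.0
    AIRisk("output manipulation", 4, 4, 0.25),               # 16 -> 12.0
]
print(above_appetite(risks, appetite=5.0))
```

Each risk returned here needs a documented decision: additional controls, formal acceptance by a responsible person, or discontinuation of the use case.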

Many organizations underestimate AI-specific attack vectors and rely on generic IT security.

Significance for governance: Security checks must be extended specifically to cover AI.

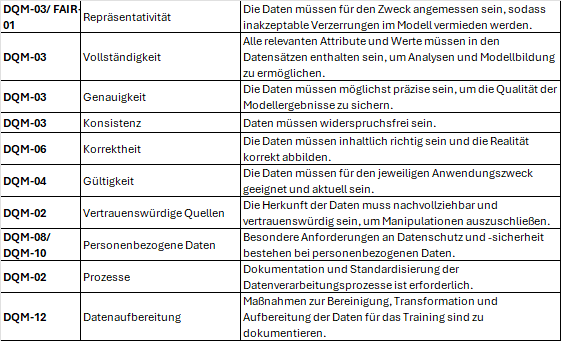

5. Data quality & management: The foundation of fairness

🧬 There is no AI strategy without a data strategy

- Data origin, processing and deletion must be fully documented (DQM-03, DQM-04, etc.).

- User rights (e.g. the “right to be forgotten”) must be technically implemented (DQM-09).
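Documented data lineage is what makes an erasure request technically feasible: only if every dataset records its origin, its processing steps and which models use it can a deletion be propagated completely. The Python sketch below assumes a hypothetical in-memory catalog (`Dataset`, `erase_subject` and all names are illustrative). It removes a data subject from every registered dataset and reports which models were trained on affected data, so that retraining or a documented assessment can follow.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Dataset:
    name: str
    source: str                  # documented origin
    processing: List[str]        # documented processing steps
    subject_ids: Set[str] = field(default_factory=set)
    used_by_models: List[str] = field(default_factory=list)


def erase_subject(catalog: Dict[str, Dataset], subject_id: str) -> Dict[str, List[str]]:
    """Delete a data subject from every registered dataset and report the
    models that use each affected dataset. Whether those models must be
    retrained is a separate, documented decision outside this sketch."""
    affected: Dict[str, List[str]] = {}
    for ds in catalog.values():
        if subject_id in ds.subject_ids:
            ds.subject_ids.discard(subject_id)
            affected[ds.name] = list(ds.used_by_models)
    return affected


catalog = {
    "loans": Dataset("loans", "core banking system", ["pseudonymize"],
                     {"u1", "u2"}, ["credit-score-2.3"]),
    "crm": Dataset("crm", "CRM export", ["deduplicate"], {"u2"}),
}
result = erase_subject(catalog, "u2")
print(result)  # both datasets contained u2; only "loans" feeds a model
```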

Strategic importance: Data quality is “only” the technical prerequisite for regulatory-compliant AI governance. The actual aim is to ensure that the basis for decisions is correct, fair and verifiable.

Strategic takeaways

- Managers don’t have to develop AI themselves, but they are accountable for how it is used.

- Transparency, fairness and security are not add-ons, but rather mandatory components of regulatory-compliant AI use for high-risk AI systems.

- The BSI criteria set provides a concrete testing framework that can be strategically operationalized.

- Anyone who sets up AI governance today is investing not only in compliance but also in future viability.

Conclusion & Next Steps

The discussion about AI in regulated industries must move away from the technical playground. With its catalog, the BSI provides a structured foundation that financial institutions can use to establish resilient AI governance.

“It is not the use of AI that is risky – but rather its uncontrolled use.”

Think about the following:

- Where do AI systems exist in my area of responsibility – directly or indirectly?

- How demonstrable are fairness, transparency and security measures today?

- Are there defined roles and review processes for AI governance?

FAQ: Frequently asked questions about the implementation of the BSI criteria

1. What distinguishes AI-specific risks from classic IT risks?

AI risks often arise from the learning behavior of the AI system, biases in training data, a lack of explainability of the AI model, or adversarial attacks. In addition, AI risks are dynamic rather than static.

2. What significance does MaRisk have in the context of AI risks?

AI systems must be incorporated into model risk management. This requires establishing standards analogous to the model validation obligations for banks (according to AT 4.3.3 MaRisk).

3. How can fairness be proven from a technical and regulatory perspective?

Through bias measurement methods, defined threshold values and documented mitigation strategies, as required by the BSI.

4. What role does transparency play in regulatory requirements?

Transparency is a basic prerequisite for testing, user acceptance and system traceability.

5. Are purchased AI models also affected by regulation?

Yes. Companies are responsible for their use of third-party components, particularly with regard to model transparency, fairness and security checks.

6. How do I establish an AI quality management system (QMS)?

The BSI catalog lists concrete structural points: documentation, audit cycles, responsibilities and traceability.

7. What does an exemplary project plan look like for the introduction of an AI system according to BSI criteria?

Scope & risk analysis

- Classification according to AI Act (e.g. high risk)

- Stakeholder identification

Governance

- Role distribution (QMS, responsible persons, audit)

- Selection of tools for documentation & monitoring

Transparency & fairness

- Assessment of model interpretability, documentation

- Bias checks, mitigation strategy

Security & Testing

- Adversarial testing

- Attack simulation, logging, response plans

Rollout & Continuous Monitoring

- Human monitoring, feedback processes

- Definition of annual review cycles and pen tests

📖 Also read: AI in finance: From black box risk to audit-proof strategic asset
