Transparent AI Systems for Trust and Compliance

Explainable AI (XAI)

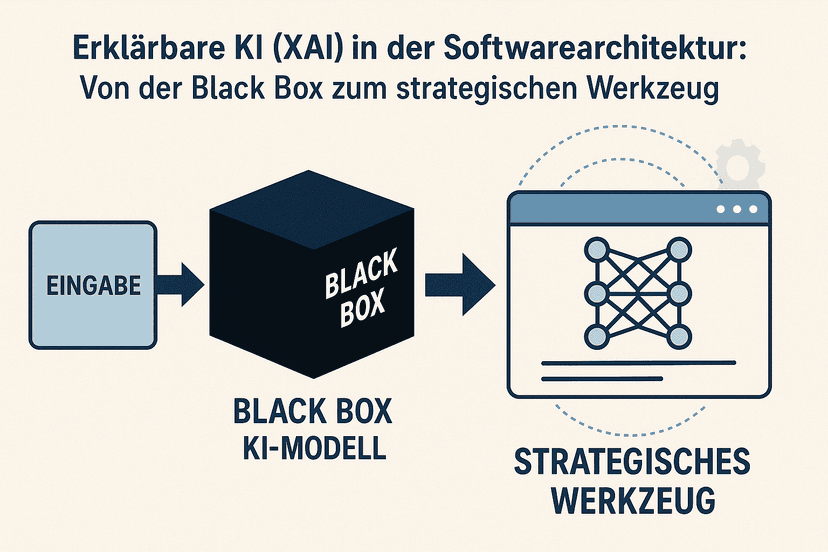

AI decisions must be explainable, traceable, and auditable, as required by GDPR Art. 22 and the EU AI Act. ADVISORI implements Explainable AI methods (SHAP, LIME, counterfactual explanations) that build trust, fulfill regulatory transparency obligations, and make your AI system audit-ready.

- ✓ Transparent AI decisions for trust and acceptance

- ✓ GDPR and EU AI Act compliant implementation

- ✓ Audit-ready documentation and traceability

- ✓ Risk minimization through interpretable AI models

Our Strengths

- Leading expertise in XAI methods and implementation

- GDPR and EU AI Act compliant transparency frameworks

- Safety-first approach with IP protection and data security

- Strategic C-level consulting for sustainable XAI adoption

Expert Insight

Explainable AI is the key to sustainable AI success. Transparent systems not only build stakeholder trust but also enable better decisions, reduce risks, and proactively meet regulatory requirements.

ADVISORI in Numbers

- 11+ years of experience

- 120+ employees

- 520+ projects

We develop an individual XAI strategy with you that optimally combines technical excellence with regulatory compliance and business requirements.

Our Approach

- Phase 1: Comprehensive analysis of your AI systems and transparency requirements

- Phase 2: Development of a tailored XAI strategy and roadmap

- Phase 3: Implementation of interpretable models and explainability tools

- Phase 4: Establishment of governance frameworks for traceable AI

- Phase 5: Continuous monitoring, optimization, and compliance assurance

"Explainable AI is not just a technical requirement but a strategic enabler for trustworthy AI adoption. Our XAI implementations create transparency without compromising performance, enabling companies to develop AI systems that are both powerful and traceable – a decisive competitive advantage in a regulated world."

Asan Stefanski

Head of Digital Transformation

Expertise & Experience:

11+ years of experience, degree in Applied Computer Science, strategic planning and leadership of AI projects, cyber security, secure software development, AI

Our Services

We offer tailored solutions for your digital transformation

XAI Strategy & Transparency Assessment

Comprehensive evaluation of your AI systems and development of a strategic roadmap for explainable AI.

- Analysis of existing AI models for interpretability

- Identification of transparency requirements and use cases

- Development of XAI roadmap and implementation strategy

- Stakeholder analysis and transparency requirements management

Interpretable ML Model Development

Development and implementation of Machine Learning models with built-in interpretability.

- Design of interpretable model architectures

- Implementation of LIME, SHAP, and other XAI methods

- Performance optimization without transparency loss

- Validation and testing of explainability features

Explainability Framework Implementation

Building comprehensive frameworks for systematic explainability of your AI systems.

- Development of explainability standards and processes

- Integration of XAI tools into existing AI pipelines

- Automated explanation generation and documentation

- User interface design for AI transparency

AI Governance for Transparency

Establishment of governance structures for traceable and responsible AI usage.

- Development of XAI governance guidelines

- Establishment of transparency KPIs and monitoring

- Training teams in XAI methods and tools

- Change management for transparent AI culture

Compliance Monitoring & Audit Support

Continuous monitoring of XAI compliance and support for audits and regulatory inquiries.

- GDPR and EU AI Act compliance monitoring

- Audit trail documentation for AI decisions

- Regulatory reporting and documentation

- External audit support and compliance validation

XAI Tool Integration & Technology Consulting

Consulting and integration of state-of-the-art XAI tools and technologies into your existing IT landscape.

- Evaluation and selection of suitable XAI tools

- Integration into existing ML-Ops and AI pipelines

- Custom XAI solution development for special requirements

- Performance optimization and scaling of XAI systems

Frequently Asked Questions about Explainable AI (XAI)

Why is Explainable AI more than just a technical requirement for the C-Suite, and how does ADVISORI position XAI as a strategic competitive advantage?

For C-level executives, Explainable AI represents a fundamental paradigm shift from pure technology adoption to trust-based, sustainable AI transformation. In an era of increasing regulation and rising stakeholder expectations, XAI is not just a compliance requirement but a strategic enabler for sustainable business innovation and risk minimization.

🎯 Strategic Imperatives for Leadership:

- Trust building and stakeholder acceptance: Transparent AI systems create trust among customers, investors, and regulatory authorities, which is essential for long-term AI adoption.

- Risk minimization and compliance security: Explainable AI proactively reduces regulatory risks and creates audit readiness for EU AI Act and GDPR requirements.

- Business value through transparency: Traceable AI decisions enable better business decisions and optimized processes through understandable insights.

- Competitive differentiation: Companies with transparent AI systems position themselves as responsible innovators in the market.

🛡️ The ADVISORI Approach for Strategic XAI Adoption:

- Business-first perspective: We develop XAI strategies that primarily support business objectives and use transparency as a value creation lever.

How do we quantify the business value of Explainable AI, and what direct impact does ADVISORI's XAI implementation have on enterprise value and risk minimization?

Investment in Explainable AI from ADVISORI is a strategic value creation lever that generates both direct cost savings and long-term value increases. Business value manifests in reduced compliance costs, increased stakeholder trust, and improved business decisions through traceable AI insights.

💰 Direct Impact on Enterprise Value and Performance:

- Compliance cost savings: Proactive XAI implementation significantly reduces future audit costs and avoids costly regulatory violations.

- Risk minimization and insurance benefits: Transparent AI systems reduce operational risks and can lead to more favorable insurance conditions.

- Improved decision quality: Traceable AI insights enable more precise business decisions and optimized resource allocation.

- Stakeholder trust and market positioning: Transparent AI practices sustainably strengthen trust among investors, customers, and partners.

📈 Strategic Value Drivers and Market Advantages:

- Regulatory future-proofing: XAI systems are better prepared for future regulatory changes and reduce adaptation costs.

- Customer retention through transparency: Traceable AI decisions increase customer trust and loyalty, leading to higher customer lifetime values.

The EU AI Act and GDPR set new standards for AI transparency. How does ADVISORI ensure that our XAI strategy is not only compliant but also future-proof for upcoming regulations?

In a rapidly evolving regulatory landscape, proactive XAI compliance is not just a legal necessity but a strategic competitive advantage. ADVISORI pursues a forward-looking approach that not only meets current EU AI Act and GDPR requirements but also anticipates future regulatory developments and positions your company for a changing legal landscape.

🔄 Adaptive Compliance Strategy as Core Principle:

- Continuous regulatory monitoring: We actively track EU AI Act implementation development, GDPR updates, and international XAI standards to keep your systems consistently compliant.

- Future-proof XAI architecture: Our explainability frameworks are based on flexible, modular architectures that can quickly adapt to new transparency requirements.

- Proactive governance integration: Establishment of robust XAI governance structures that go beyond minimum requirements and serve as best practice standards.

- Comprehensive documentation and audit trails: Systematic capture of all AI decision processes for complete transparency and regulatory readiness.

How does ADVISORI transform Explainable AI from a compliance cost factor to a strategic innovation driver, and what new business opportunities does transparent AI open up?

ADVISORI positions Explainable AI not as a regulatory burden but as a fundamental innovation catalyst and business transformation enabler. Our approach transforms XAI investments into strategic growth engines that enable new business models, strengthen customer trust, and create sustainable competitive advantages while proactively meeting compliance requirements.

🚀 From Compliance to Business Innovation:

- New service models through transparency: XAI enables completely new business models, from explainable AI-as-a-Service offerings to transparency premium services.

- Customer trust as differentiation factor: Traceable AI decisions create unique value propositions and enable premium positioning in the market.

- Data monetization through insights: Transparent AI systems generate valuable, traceable insights that can be marketed as standalone products.

- Partnership ecosystems: XAI competence enables strategic alliances with other companies that need transparency standards.

💡 ADVISORI's Innovation Framework for XAI:

- Business model innovation through transparency: Development of new value creation models that use XAI as a core component and unlock market opportunities.

What are the key technical challenges in implementing explainable AI systems, and how does ADVISORI ensure both interpretability and performance optimization?

Implementing explainable AI systems presents unique technical challenges that require balancing interpretability with performance, scalability, and accuracy. ADVISORI addresses these challenges through sophisticated architectural approaches that maintain model effectiveness while ensuring transparency and traceability throughout the AI decision-making process.

⚖️ Balancing Performance and Interpretability:

- Model architecture optimization: We design hybrid architectures that combine high-performing models with interpretable components, ensuring transparency without sacrificing accuracy.

- Selective explainability implementation: Strategic application of explainability methods where they provide maximum value while maintaining computational efficiency.

- Performance benchmarking: Continuous monitoring of model performance metrics to ensure explainability enhancements do not compromise business outcomes.

- Adaptive transparency levels: Implementation of dynamic explainability that adjusts detail levels based on use case requirements and stakeholder needs.

🔧 ADVISORI's Technical Excellence Framework:

- Advanced XAI methodologies: Implementation of cutting-edge techniques including LIME, SHAP, attention mechanisms, and gradient-based explanations tailored to specific model types.

- Scalable explanation infrastructure: Development of robust systems that can generate explanations at enterprise scale without performance degradation.
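To make the SHAP idea mentioned above concrete, here is a minimal sketch of exact Shapley attribution in plain Python. It is not ADVISORI's implementation and does not use the SHAP library; the `model` function, its feature names, and the zero baseline are assumptions chosen for illustration.

```python
from itertools import combinations
from math import factorial


def model(x):
    """Hypothetical scoring model with an interaction term (illustration only)."""
    income, debt, age = x
    return 2.0 * income - 1.5 * debt + 0.5 * income * age


def shapley_values(predict, x, baseline):
    """Exact Shapley attribution of predict(x) - predict(baseline).

    Features outside a coalition are replaced by their baseline value.
    The cost grows as 2^n, so this only suits a handful of features.
    """
    n = len(x)

    def value(coalition):
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


x = [3.0, 2.0, 1.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(model, x, baseline)
```

The attributions add up to the difference between the prediction and the baseline output, and the interaction term is split evenly between the two features involved. Production tools approximate this computation because exact enumeration does not scale.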

How does ADVISORI implement GDPR-compliant explainable AI systems that meet the 'right to explanation' requirements while protecting intellectual property?

GDPR compliance in explainable AI requires sophisticated technical and legal frameworks that balance transparency obligations with intellectual property protection. ADVISORI implements comprehensive solutions that meet regulatory requirements while safeguarding proprietary algorithms and business-critical information.

🔒 Privacy-Preserving Explainability Architecture:

- Differential privacy integration: Implementation of mathematical frameworks that provide meaningful explanations while protecting individual data points and model internals.

- Federated explanation systems: Development of distributed explainability that enables transparency without centralizing sensitive data or exposing proprietary algorithms.

- Selective information disclosure: Technical mechanisms that provide sufficient explanation detail for GDPR compliance while protecting competitive advantages.

- Anonymization and aggregation: Advanced techniques that deliver insights about AI decision-making without revealing specific data patterns or model vulnerabilities.

⚖️ Legal and Technical Compliance Framework:

- Right to explanation implementation: Technical systems that automatically generate human-readable explanations for automated decision-making as required by GDPR Article 22.

- Audit trail generation: Comprehensive logging systems that document AI decision processes for regulatory review while maintaining security protocols.
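One possible shape for such an audit trail is a hash-chained log, sketched below under stated assumptions. The record fields (`decision_id`, `model_version`, `outcome`, `explanation`) and the sample values are hypothetical, and a real system would also add timestamps, access control, and durable storage.

```python
import hashlib
import json


def append_record(log, decision_id, model_version, outcome, explanation):
    """Append a decision record whose hash also covers the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else "0" * 64
    body = {
        "decision_id": decision_id,
        "model_version": model_version,
        "outcome": outcome,
        "explanation": explanation,
        "prev_hash": prev_hash,
    }
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    record = dict(body, hash=hashlib.sha256(payload).hexdigest())
    log.append(record)
    return record


def verify_chain(log):
    """Return True if no record has been altered, removed, or reordered."""
    prev_hash = "0" * 64
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if body["prev_hash"] != prev_hash:
            return False
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if hashlib.sha256(payload).hexdigest() != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True


log = []
append_record(log, "d-001", "credit-v1", "declined", {"debt_ratio": -0.42, "income": 0.10})
append_record(log, "d-002", "credit-v1", "approved", {"debt_ratio": 0.05, "income": 0.31})
chain_ok = verify_chain(log)
```

Because each hash depends on the one before it, editing an earlier record invalidates every later one, which lets a reviewer detect after-the-fact changes to logged decisions.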

What specific XAI methodologies and tools does ADVISORI implement, and how do you ensure explanation quality and consistency across different AI models and use cases?

ADVISORI employs a comprehensive suite of state-of-the-art XAI methodologies, carefully selected and customized for specific model types and business contexts. Our approach ensures consistent, high-quality explanations across diverse AI applications while maintaining technical rigor and business relevance.

🔬 Advanced XAI Methodology Portfolio:

- Model-agnostic techniques: Implementation of LIME and SHAP for universal applicability across different model types, providing consistent explanation frameworks regardless of underlying algorithms.

- Model-specific approaches: Deployment of specialized methods like attention visualization for transformers, gradient-based explanations for neural networks, and feature importance for tree-based models.

- Counterfactual explanations: Development of systems that show how input changes would affect outcomes, providing actionable insights for decision-makers.

- Causal inference integration: Implementation of causal AI methods that explain not just correlations but actual cause-effect relationships in model decisions.

📊 Explanation Quality Assurance Framework:

- Multi-metric evaluation: Comprehensive assessment using faithfulness, stability, comprehensibility, and actionability metrics to ensure explanation reliability.

- Human-in-the-loop validation: Integration of domain expert feedback to validate explanation accuracy and business relevance.
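The counterfactual idea from the portfolio above can be sketched as a simple search: for each feature a person could change, find the smallest step that flips the decision. The `approve` rule, its integer weights, and the applicant values are hypothetical stand-ins for a trained model, and real counterfactual methods also handle multi-feature changes and plausibility constraints.

```python
def approve(applicant):
    """Hypothetical decision rule standing in for a trained model."""
    score = (4 * applicant["income"]
             - 10 * applicant["debt"]
             + 20 * applicant["years_employed"])
    return score >= 200


def single_feature_counterfactuals(predict, instance, steps, max_steps=20):
    """For each mutable feature, find the smallest change that flips the decision.

    `steps` maps a feature name to a signed step size (the direction it may move).
    Features that cannot flip the decision within max_steps are omitted.
    """
    original = predict(instance)
    results = {}
    for feature, step in steps.items():
        candidate = dict(instance)
        for k in range(1, max_steps + 1):
            candidate[feature] = instance[feature] + k * step
            if predict(candidate) != original:
                results[feature] = candidate[feature]
                break
    return results


applicant = {"income": 40, "debt": 10, "years_employed": 2}
steps = {"income": 5, "debt": -2, "years_employed": 1}
counterfactuals = single_feature_counterfactuals(approve, applicant, steps)
```

Each entry reads as an actionable statement, for example "the application would have been approved with an income of 65 instead of 40".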

How does ADVISORI handle the scalability challenges of explainable AI in enterprise environments, and what infrastructure is required for large-scale XAI deployment?

Enterprise-scale explainable AI deployment requires sophisticated infrastructure and architectural considerations that can handle high-volume explanation generation while maintaining performance and cost efficiency. ADVISORI designs scalable XAI systems that grow with business needs and integrate seamlessly with existing enterprise infrastructure.

🏗️ Scalable XAI Architecture Design:

- Distributed explanation processing: Implementation of microservices architectures that can scale explanation generation across multiple servers and cloud environments.

- Caching and optimization strategies: Intelligent caching of frequently requested explanations and pre-computation of common explanation scenarios to reduce latency.

- Load balancing and resource management: Dynamic allocation of computational resources based on explanation demand and complexity requirements.

- Edge computing integration: Deployment of explanation capabilities at edge locations for reduced latency and improved user experience.

☁️ Cloud-Native XAI Infrastructure:

- Container orchestration: Kubernetes-based deployment strategies that enable automatic scaling of explanation services based on demand.

- Serverless explanation functions: Implementation of event-driven explanation generation that scales automatically and optimizes costs.
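As a small illustration of the caching strategy listed above, the sketch below memoizes explanations with `functools.lru_cache` from the Python standard library. The linear `WEIGHTS`, the `explain` stand-in, and the rounding precision are assumptions; a distributed deployment would more likely use a shared cache service.

```python
from functools import lru_cache

WEIGHTS = (0.8, -0.5, 0.3)  # hypothetical linear model coefficients
compute_count = 0           # counts how often the slow path actually runs


def explain(features):
    """Stand-in for a slow explainer: per-feature contribution of a linear model."""
    global compute_count
    compute_count += 1
    return tuple(w * v for w, v in zip(WEIGHTS, features))


@lru_cache(maxsize=1024)
def explain_cached(features):
    """Cache explanations keyed by the (hashable) feature tuple."""
    return explain(features)


def explain_request(raw_features, precision=2):
    """Round inputs so near-identical requests share one cache entry."""
    key = tuple(round(v, precision) for v in raw_features)
    return explain_cached(key)


first = explain_request([1.001, 2.0, 3.0])
second = explain_request([1.0, 2.0, 3.004])
```

Rounding trades exactness for hit rate: the cached explanation describes the rounded input, so the precision has to be chosen to stay within what the model treats as equivalent.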

How does ADVISORI implement explainable AI solutions for highly regulated industries like finance, healthcare, and automotive, where transparency is critical for safety and compliance?

Highly regulated industries require specialized explainable AI approaches that meet stringent safety, compliance, and transparency requirements. ADVISORI develops industry-specific XAI solutions that address unique regulatory frameworks while maintaining the highest standards of accuracy and reliability for mission-critical applications.

🏥 Healthcare and Life Sciences XAI:

- Clinical decision support transparency: Implementation of explainable AI systems for medical diagnosis and treatment recommendations that provide clear reasoning for healthcare professionals.

- Regulatory compliance for medical devices: Development of XAI solutions that meet FDA and EMA requirements for AI-based medical devices and diagnostic tools.

- Patient safety and liability protection: Creation of audit trails and explanation systems that support clinical decision-making while protecting against malpractice risks.

- Ethical AI for healthcare: Implementation of bias detection and fairness mechanisms to ensure equitable treatment recommendations across diverse patient populations.

🏦 Financial Services and Banking XAI:

- Credit scoring transparency: Development of explainable credit risk models that meet fair lending regulations and provide clear reasoning for loan decisions.

What role does explainable AI play in building stakeholder trust and user acceptance, and how does ADVISORI design XAI interfaces for different user groups?

Building stakeholder trust through explainable AI requires sophisticated user experience design that delivers appropriate levels of transparency to different audiences. ADVISORI creates multi-layered explanation systems that provide relevant insights to technical teams, business users, regulators, and end customers while maintaining usability and comprehension.

👥 Stakeholder-Specific Explanation Design:

- Executive dashboards: High-level explanation interfaces that provide strategic insights about AI performance, risk indicators, and business impact without overwhelming technical detail.

- Technical team interfaces: Detailed explanation tools for data scientists and engineers that provide deep insights into model behavior, feature importance, and performance metrics.

- End-user explanations: Simple, intuitive explanations for customers and end-users that build confidence in AI-driven decisions without requiring technical expertise.

- Regulatory reporting interfaces: Comprehensive explanation systems designed specifically for audit and regulatory review with complete documentation and traceability.

🎨 User Experience Excellence in XAI:

- Progressive disclosure: Interface design that allows users to drill down from high-level explanations to detailed technical insights based on their needs and expertise.
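Progressive disclosure can be sketched as one set of feature contributions rendered at different levels of detail per audience. The audience names, the detail limits in `AUDIENCE_DETAIL`, and the contribution values below are illustrative assumptions, not a prescribed design.

```python
# Hypothetical mapping: how many drivers each audience sees (None = all).
AUDIENCE_DETAIL = {"end_user": 1, "executive": 2, "data_scientist": None}


def render_explanation(decision, contributions, audience):
    """Return the decision plus its strongest drivers, worded for the audience."""
    limit = AUDIENCE_DETAIL[audience]
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    shown = ranked if limit is None else ranked[:limit]
    lines = [f"Decision: {decision}"]
    for name, value in shown:
        if audience == "data_scientist":
            # Technical view: signed numeric contribution
            lines.append(f"{name}: {value:+.2f}")
        else:
            # Plain-language view: direction only
            direction = "raised" if value > 0 else "lowered"
            lines.append(f"{name} {direction} the score")
    return lines


contributions = {"debt_ratio": -0.42, "income": 0.10, "account_age": 0.03}
end_user_view = render_explanation("declined", contributions, "end_user")
executive_view = render_explanation("declined", contributions, "executive")
expert_view = render_explanation("declined", contributions, "data_scientist")
```

All three views derive from the same underlying attribution, so drilling down adds detail without ever contradicting the simpler view.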

How does ADVISORI address the challenge of explaining complex ensemble models and deep learning systems while maintaining accuracy and business value?

Explaining complex AI systems like ensemble models and deep neural networks requires sophisticated approaches that balance technical accuracy with business comprehension. ADVISORI employs advanced explanation techniques that preserve model performance while providing meaningful insights into complex decision-making processes.

🧠 Deep Learning Explainability Strategies:

- Attention mechanism visualization: Implementation of attention maps and saliency techniques that highlight which input features most influence neural network decisions.

- Layer-wise relevance propagation: Advanced techniques that trace decision-making through neural network layers to identify critical pathways and feature interactions.

- Gradient-based explanations: Implementation of gradient analysis methods that show how small input changes affect model outputs and decision boundaries.

- Surrogate model approaches: Development of simpler, interpretable models that approximate complex neural network behavior for explanation purposes.

🔗 Ensemble Model Transparency:

- Individual model contribution analysis: Breakdown of how different models within an ensemble contribute to final decisions and identification of consensus patterns.
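For a weighted-average ensemble, the contribution analysis described above reduces to simple arithmetic, as in the following sketch. The member names, their fixed outputs, the weights, and the 0.5 vote threshold are assumptions standing in for trained models; stacked or boosted ensembles need a different decomposition.

```python
def ensemble_breakdown(members, weights, x, threshold=0.5):
    """Split a weighted-average ensemble score into per-member contributions.

    Returns the ensemble score, each member's share of it, and the fraction
    of members that agree with the majority vote at the given threshold.
    """
    total_weight = sum(weights.values())
    outputs = {name: predict(x) for name, predict in members.items()}
    contributions = {
        name: weights[name] * outputs[name] / total_weight for name in members
    }
    score = sum(contributions.values())
    votes = [out >= threshold for out in outputs.values()]
    agreement = max(votes.count(True), votes.count(False)) / len(votes)
    return score, contributions, agreement


# Hypothetical members: each returns a probability-like score for input x.
members = {
    "gradient_boosting": lambda x: 0.8,
    "logistic_regression": lambda x: 0.6,
    "rule_set": lambda x: 0.2,
}
weights = {"gradient_boosting": 2.0, "logistic_regression": 1.0, "rule_set": 1.0}

score, contributions, agreement = ensemble_breakdown(members, weights, x={})
```

Here two of three members vote above the threshold, so the agreement value flags a split decision that may deserve a closer look than a unanimous one.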

What are the key performance indicators and metrics ADVISORI uses to measure the effectiveness of explainable AI implementations, and how do you ensure continuous improvement?

Measuring explainable AI effectiveness requires comprehensive metrics that evaluate both technical performance and business impact. ADVISORI implements sophisticated measurement frameworks that track explanation quality, user satisfaction, compliance effectiveness, and business value to ensure continuous improvement of XAI systems.

📊 Technical Explanation Quality Metrics:

- Faithfulness measurement: Quantitative assessment of how accurately explanations represent actual model behavior through perturbation testing and correlation analysis.

- Stability evaluation: Measurement of explanation consistency across similar inputs and model updates to ensure reliable and predictable explanation behavior.

- Completeness assessment: Evaluation of whether explanations capture all significant factors influencing model decisions without overwhelming users with irrelevant details.

- Computational efficiency tracking: Monitoring of explanation generation time and resource usage to ensure scalable performance in production environments.

👤 User Experience and Adoption Metrics:

- User comprehension testing: Regular assessment of how well different user groups understand and can act upon provided explanations through surveys and usability studies.
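The faithfulness measurement named above (perturbation testing plus correlation analysis) can be sketched as follows: reset each feature to a baseline, record how much the output changes, and correlate those changes with the claimed attributions. The linear `predict` model, the zero baseline, and both attribution vectors are illustrative assumptions.

```python
from math import sqrt


def pearson(a, b):
    """Pearson correlation of two equal-length sequences (needs non-zero variance)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((p - mean_a) * (q - mean_b) for p, q in zip(a, b))
    var_a = sum((p - mean_a) ** 2 for p in a)
    var_b = sum((q - mean_b) ** 2 for q in b)
    return cov / sqrt(var_a * var_b)


def faithfulness(predict, x, baseline, attributions):
    """Correlate attributions with the output change when each feature is reset."""
    full = predict(x)
    drops = []
    for i in range(len(x)):
        perturbed = list(x)
        perturbed[i] = baseline[i]
        drops.append(full - predict(perturbed))
    return pearson(attributions, drops)


def predict(x):
    """Hypothetical linear model used only for this illustration."""
    return 2.0 * x[0] - 1.0 * x[1] + 0.5 * x[2]


x = [1.0, 2.0, 4.0]
baseline = [0.0, 0.0, 0.0]
good_score = faithfulness(predict, x, baseline, [2.0, -2.0, 2.0])
poor_score = faithfulness(predict, x, baseline, [0.1, 3.0, 0.2])
```

A score near 1 means the explanation ranks features the way the model actually responds to them; a low or negative score signals an explanation that should not be shown to users.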

What is ADVISORI's approach to change management and organizational adoption when implementing explainable AI systems across enterprise teams?

Successful explainable AI implementation requires comprehensive change management that addresses technical, cultural, and organizational challenges. ADVISORI develops holistic adoption strategies that ensure smooth transition to transparent AI practices while building organizational capabilities and stakeholder buy-in across all levels of the enterprise.

🎯 Strategic Change Management Framework:

- Stakeholder mapping and engagement: Comprehensive identification of all affected parties from technical teams to end-users, with tailored communication and training strategies for each group.

- Cultural transformation planning: Development of organizational culture initiatives that promote transparency, accountability, and trust in AI decision-making processes.

- Executive sponsorship cultivation: Building strong C-level support and championship for explainable AI initiatives to ensure adequate resources and organizational priority.

- Resistance management strategies: Proactive identification and mitigation of potential resistance points through education, involvement, and addressing specific concerns.

📚 Comprehensive Training and Capability Building:

- Role-specific training programs: Customized education for different organizational roles from data scientists to business users, ensuring everyone understands their part in the XAI ecosystem.

How does ADVISORI handle the integration of explainable AI with existing enterprise systems, data pipelines, and ML operations workflows?

Enterprise XAI integration requires sophisticated technical planning that seamlessly incorporates explainability into existing infrastructure without disrupting critical business operations. ADVISORI designs integration strategies that leverage current investments while enhancing them with transparency capabilities that scale with organizational needs.

🔧 Technical Integration Architecture:

- API-first design principles: Development of explainability services with robust APIs that integrate seamlessly with existing applications, dashboards, and business intelligence tools.

- Microservices architecture: Implementation of modular XAI components that can be deployed independently and scaled based on demand without affecting core business systems.

- Legacy system compatibility: Creation of integration layers that enable explainability for existing AI models and systems without requiring complete rebuilds or replacements.

- Real-time and batch processing support: Flexible architecture that supports both immediate explanation needs and large-scale batch explanation generation for historical analysis.

📊 Data Pipeline and MLOps Integration:

- CI/CD pipeline enhancement: Integration of explainability testing and validation into existing continuous integration and deployment workflows for AI models.
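An explainability check in a CI/CD pipeline might look like the gate below, which could be wrapped in a unit test that fails the build. It verifies two properties on sample inputs: attributions add up to the prediction minus the baseline output, and a protected attribute carries almost no attribution. The model weights, the thresholds, and the choice of index 2 as the protected feature are assumptions.

```python
WEIGHTS = [2.0, -1.0, 0.0]  # hypothetical model; index 2 is the protected attribute


def predict(x):
    return sum(w * v for w, v in zip(WEIGHTS, x))


def explain(x, baseline=(0.0, 0.0, 0.0)):
    """Per-feature contribution of the linear model relative to the baseline."""
    return [w * (v - b) for w, v, b in zip(WEIGHTS, x, baseline)]


def check_explanations(predict, explain, samples, baseline, protected_index,
                       tolerance=1e-6, max_protected_share=0.05):
    """Return a list of failure messages; an empty list means the gate passes."""
    failures = []
    base_output = predict(baseline)
    for n, x in enumerate(samples):
        phi = explain(x)
        # Attributions should sum to the prediction minus the baseline output.
        gap = abs(sum(phi) - (predict(x) - base_output))
        if gap > tolerance:
            failures.append(f"sample {n}: attributions off by {gap:.4f}")
        # The protected attribute should carry almost none of the attribution.
        total = sum(abs(p) for p in phi)
        if total > 0 and abs(phi[protected_index]) / total > max_protected_share:
            failures.append(f"sample {n}: protected feature share too high")
    return failures


samples = [[1.0, 2.0, 1.0], [3.0, 0.0, 0.0]]
baseline = [0.0, 0.0, 0.0]
passing = check_explanations(predict, explain, samples, baseline, protected_index=2)
failing = check_explanations(
    predict, lambda x: [p + 0.5 for p in explain(x)], samples, baseline, protected_index=2
)
```

Running such a gate on every model release catches explainer regressions before deployment, in the same way unit tests catch code regressions.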

What are the cost considerations and ROI models for explainable AI implementation, and how does ADVISORI help organizations justify XAI investments?

Explainable AI investment requires careful financial planning and clear ROI demonstration to secure organizational support and resources. ADVISORI develops comprehensive cost-benefit models that quantify both direct and indirect value creation while providing realistic implementation budgets and timeline expectations for sustainable XAI adoption.

💰 Comprehensive Cost Analysis Framework:

• Implementation cost breakdown: Detailed analysis of technology costs, professional services, training expenses, and infrastructure requirements for realistic budget planning.

• Ongoing operational expenses: Assessment of maintenance, support, and continuous improvement costs to ensure sustainable long-term XAI operations.

• Hidden cost identification: Recognition of indirect costs such as change management, productivity impacts during transition, and potential system integration challenges.

• Cost optimization strategies: Development of phased implementation approaches that spread costs over time while delivering incremental value to the organization.

📈 ROI Quantification and Value Demonstration:

• Risk reduction valuation: Quantification of reduced regulatory, operational, and reputational risks through improved AI transparency and compliance capabilities.
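The arithmetic behind a phased cost plan and a risk reduction valuation can be sketched in a few lines. The example below is a simplified model, not ADVISORI's ROI methodology: it values risk reduction as the drop in expected loss (probability times impact), computes a plain ROI ratio, and finds the payback period for phased costs. All figures are placeholder values in arbitrary currency units.

```python
from typing import Optional, Sequence


def expected_loss_reduction(prob_before: float, prob_after: float, impact: float) -> float:
    """Risk reduction valued as the drop in expected loss (probability x impact)."""
    return (prob_before - prob_after) * impact


def simple_roi(total_benefit: float, total_cost: float) -> float:
    """ROI as a fraction: (benefit - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost


def payback_period(costs: Sequence[float], benefits: Sequence[float]) -> Optional[int]:
    """First period (1-based) where cumulative benefit covers cumulative cost, else None."""
    balance = 0.0
    for period, (cost, benefit) in enumerate(zip(costs, benefits), start=1):
        balance += benefit - cost
        if balance >= 0:
            return period
    return None


# Placeholder figures for a three-phase rollout, for illustration only.
phase_costs = [100.0, 50.0, 50.0]
phase_benefits = [0.0, 150.0, 200.0]

risk_value = expected_loss_reduction(prob_before=0.25, prob_after=0.125, impact=800.0)
roi = simple_roi(sum(phase_benefits), sum(phase_costs))
payback = payback_period(phase_costs, phase_benefits)
```

A real business case would also discount future cash flows and account for the hidden costs listed above; the sketch leaves both out to keep the arithmetic visible.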

How does ADVISORI ensure long-term sustainability and evolution of explainable AI systems as technology and regulatory requirements change?

Long-term XAI sustainability requires forward-thinking architecture and governance that can adapt to evolving technology landscapes and regulatory environments. ADVISORI designs explainable AI systems with built-in flexibility and evolution capabilities that protect organizational investments while enabling continuous improvement and adaptation to changing requirements.

🔮 Future-Proofing Technology Architecture:

• Modular and extensible design: Implementation of XAI systems with flexible architectures that can incorporate new explanation methods and technologies as they emerge.

• Technology abstraction layers: Creation of interfaces that separate explanation logic from underlying implementation, enabling technology upgrades without system overhaul.

• Open standards adoption: Use of industry-standard protocols and formats that ensure compatibility with future tools and technologies in the XAI ecosystem.

• Cloud-native and containerized deployment: Implementation strategies that leverage modern infrastructure patterns for scalability, maintainability, and technology evolution.
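The abstraction-layer idea above can be illustrated with a small interface that callers depend on, while the explanation method behind it stays swappable. In this sketch the `Explainer` protocol, both implementations, and the scoring model are hypothetical; the occlusion method (replace one feature with a baseline value and measure the change in prediction) is simply a convenient stand-in for whichever technique is actually in use.

```python
from typing import Callable, Dict, Protocol

Features = Dict[str, float]


class Explainer(Protocol):
    """Interface that callers depend on, independent of the method behind it."""

    def explain(self, features: Features) -> Dict[str, float]: ...


class LinearExplainer:
    """Exact attributions for a linear model: weight * value."""

    def __init__(self, weights: Dict[str, float]) -> None:
        self._weights = weights

    def explain(self, features: Features) -> Dict[str, float]:
        return {name: self._weights[name] * value for name, value in features.items()}


class OcclusionExplainer:
    """Model-agnostic attributions: prediction change when a feature is set to baseline."""

    def __init__(self, predict: Callable[[Features], float], baseline: Features) -> None:
        self._predict = predict
        self._baseline = baseline

    def explain(self, features: Features) -> Dict[str, float]:
        full = self._predict(features)
        attributions = {}
        for name in features:
            occluded = {**features, name: self._baseline[name]}
            attributions[name] = full - self._predict(occluded)
        return attributions


def top_feature(explainer: Explainer, features: Features) -> str:
    """Caller code: works with any object that satisfies the Explainer protocol."""
    attributions = explainer.explain(features)
    return max(attributions, key=lambda name: abs(attributions[name]))


# Hypothetical model and input.
WEIGHTS = {"income": 0.5, "debt": -0.25}


def predict(features: Features) -> float:
    return sum(WEIGHTS[name] * value for name, value in features.items())


sample = {"income": 4.0, "debt": 2.0}
linear = LinearExplainer(WEIGHTS)
occlusion = OcclusionExplainer(predict, baseline={"income": 0.0, "debt": 0.0})
```

Swapping `linear` for `occlusion` requires no change to `top_feature`, which is the property the abstraction layer is meant to provide.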

📋 Adaptive Governance and Compliance Framework:

• Regulatory monitoring and adaptation: Establishment of processes to track regulatory changes and quickly adapt XAI systems to meet new compliance requirements.

How does ADVISORI address bias detection and fairness considerations in explainable AI systems, and what methods are used to ensure equitable AI decision-making?

Bias detection and fairness in explainable AI require analytical frameworks that identify, measure, and mitigate unfair treatment across different demographic groups and use cases. ADVISORI implements fairness assessment methodologies that help AI systems make equitable decisions while providing clear explanations for fairness-related choices and interventions.

⚖️ Comprehensive Bias Detection Framework:

• Multi-dimensional bias analysis: Systematic evaluation of AI systems across multiple protected characteristics including race, gender, age, socioeconomic status, and other relevant demographic factors.

• Statistical parity assessment: Quantitative measurement of outcome differences across groups to identify potential discriminatory patterns in AI decision-making.

• Individual fairness evaluation: Assessment of whether similar individuals receive similar treatment from AI systems, regardless of group membership.

• Intersectional bias detection: Advanced analysis that identifies bias affecting individuals who belong to multiple protected groups simultaneously.
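The statistical parity assessment described above reduces to comparing positive-outcome rates between groups. The following minimal sketch computes the statistical parity difference (largest minus smallest group rate); using a tuple of attributes as the group key gives a simple intersectional view. The group labels and outcome data are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, Hashable, Iterable, Tuple


def positive_rates(records: Iterable[Tuple[Hashable, int]]) -> Dict[Hashable, float]:
    """Share of positive outcomes (1) per group; records are (group, outcome) pairs."""
    counts = defaultdict(lambda: [0, 0])  # group -> [positives, total]
    for group, outcome in records:
        counts[group][0] += outcome
        counts[group][1] += 1
    return {group: positives / total for group, (positives, total) in counts.items()}


def statistical_parity_difference(records: Iterable[Tuple[Hashable, int]]) -> float:
    """Largest minus smallest positive rate across groups; 0.0 means parity."""
    rates = positive_rates(records)
    return max(rates.values()) - min(rates.values())


# Made-up decisions: (group, outcome) with outcome 1 = approved.
decisions = [
    ("a", 1), ("a", 1), ("a", 0), ("a", 1),
    ("b", 1), ("b", 0), ("b", 0), ("b", 0),
]
gap = statistical_parity_difference(decisions)

# Intersectional view: the group key is a tuple of several attributes.
intersectional = [
    (("a", "young"), 1), (("a", "old"), 1),
    (("b", "young"), 1), (("b", "old"), 0),
]
intersectional_rates = positive_rates(intersectional)
```

A gap near zero does not by itself establish fairness; it only shows that approval rates are similar across the groups that were compared.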

🔍 Fairness-Aware Explainability Methods:

• Counterfactual fairness explanations: Development of explanations that show how decisions would change if sensitive attributes were different, helping identify unfair dependencies.
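A very simple approximation of this idea is an attribute flip test: change only the sensitive attribute and see whether the decision changes. Note that this is weaker than counterfactual fairness in the causal sense, because it does not adjust other features that may depend on the sensitive attribute. The decision rule and rows below are hypothetical and deliberately biased so that the test has something to find.

```python
from typing import Any, Callable, Dict, List

Row = Dict[str, Any]


def flip_test(
    predict: Callable[[Row], int],
    rows: List[Row],
    attribute: str,
    values: List[Any],
) -> List[Row]:
    """Return the rows whose prediction changes when only `attribute` is varied."""
    flagged = []
    for row in rows:
        outcomes = {predict({**row, attribute: value}) for value in values}
        if len(outcomes) > 1:
            flagged.append(row)
    return flagged


def biased_model(row: Row) -> int:
    """Hypothetical decision rule that uses a lower income threshold for one group."""
    threshold = 2.0 if row["gender"] == "m" else 3.0
    return 1 if row["income"] >= threshold else 0


applicants = [
    {"income": 2.5, "gender": "f"},  # decision depends on gender
    {"income": 4.0, "gender": "f"},  # approved either way
    {"income": 1.0, "gender": "m"},  # rejected either way
]
flagged = flip_test(biased_model, applicants, attribute="gender", values=["f", "m"])
```

Each flagged row can then be presented as an explanation of the form "the decision would have been different if the sensitive attribute had been different".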

What role does human-AI collaboration play in ADVISORI's explainable AI implementations, and how do you design systems that enhance rather than replace human decision-making?

Human-AI collaboration in explainable AI requires careful design that leverages the strengths of both human intuition and AI capabilities while maintaining appropriate human oversight and control. ADVISORI develops collaborative systems that augment human decision-making through transparent AI assistance while preserving human agency and accountability in critical decisions.

🤝 Human-Centered XAI Design Principles:

• Complementary capability mapping: Identification of tasks where AI excels versus areas where human judgment is superior, designing systems that leverage both strengths effectively.

• Cognitive load optimization: Development of explanation interfaces that provide relevant information without overwhelming human decision-makers with excessive detail or complexity.

• Trust calibration mechanisms: Implementation of systems that help humans develop appropriate levels of trust in AI recommendations through transparent performance indicators.

• Human agency preservation: Design of systems that maintain human control over final decisions while providing AI insights to inform and improve human judgment.
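One candidate for the transparent performance indicators mentioned under trust calibration is a calibration table, which compares the confidence a model states with how often it is actually right. The sketch below is one possible indicator rather than a prescribed method; the binning scheme, function names, and sample data are assumptions for this example.

```python
from typing import List, Sequence, Tuple

CalibrationRow = Tuple[float, float, int]  # (mean confidence, accuracy, count)


def calibration_table(
    confidences: Sequence[float], correct: Sequence[int], n_bins: int = 5
) -> List[CalibrationRow]:
    """Group predictions into equal-width confidence bins and report accuracy per bin."""
    bins: List[List[Tuple[float, int]]] = [[] for _ in range(n_bins)]
    for confidence, is_correct in zip(confidences, correct):
        index = min(int(confidence * n_bins), n_bins - 1)
        bins[index].append((confidence, is_correct))

    table = []
    for items in bins:
        if items:  # skip empty bins
            mean_confidence = sum(c for c, _ in items) / len(items)
            accuracy = sum(ok for _, ok in items) / len(items)
            table.append((mean_confidence, accuracy, len(items)))
    return table


def expected_calibration_error(table: List[CalibrationRow]) -> float:
    """Count-weighted average gap between stated confidence and observed accuracy."""
    total = sum(count for _, _, count in table)
    return sum(count / total * abs(conf - acc) for conf, acc, count in table)


# Made-up history: model confidence and whether the recommendation was correct.
confidences = [0.9, 0.9, 0.9, 0.9, 0.6, 0.6]
correct = [1, 1, 1, 0, 1, 0]

table = calibration_table(confidences, correct)
ece = expected_calibration_error(table)
```

Showing decision-makers that "90% confident" recommendations were right about 75% of the time in this made-up history gives them a concrete basis for how much weight to place on the system.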

How does ADVISORI handle explainable AI for real-time and edge computing scenarios where computational resources and latency constraints are critical?

Explainable AI for real-time and edge computing presents specific challenges: explanations must be generated within tight computational and latency budgets. ADVISORI develops lightweight XAI solutions that balance explanation quality against these constraints while maintaining the reliability required for time-critical applications.

⚡ Performance-Optimized XAI Architecture:

• Lightweight explanation algorithms: Implementation of computationally efficient explanation methods that provide meaningful insights without significant performance overhead.

• Pre-computed explanation templates: Development of explanation frameworks that pre-calculate common explanation patterns to reduce real-time computational requirements.

• Hierarchical explanation delivery: Multi-level explanation systems that provide immediate high-level insights with optional detailed explanations available on demand.

• Edge-optimized model architectures: Design of AI models that are inherently more interpretable while maintaining performance suitable for edge deployment.

🔧 Resource-Constrained Implementation Strategies:

• Explanation caching and reuse: Intelligent caching systems that store and reuse explanations for similar scenarios to reduce computational overhead.

• Adaptive explanation complexity: Dynamic adjustment of explanation detail based on available computational resources and user requirements.
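Caching and adaptive complexity can be combined in a small wrapper around any explanation function. The sketch below rounds inputs to build a cache key, so near-identical requests share one stored explanation, and optionally returns only the `top_k` largest attributions when resources are tight. The class name, rounding strategy, and `top_k` parameter are assumptions for illustration; reusing an explanation for a merely similar input is an approximation whose acceptable precision depends on the application.

```python
from typing import Callable, Dict, List, Optional, Tuple

Features = Dict[str, float]


class CachedExplainer:
    """Wraps an expensive explain function with a cache keyed on rounded inputs."""

    def __init__(
        self, explain: Callable[[Features], Dict[str, float]], precision: int = 1
    ) -> None:
        self._explain = explain
        self._precision = precision
        self._cache: Dict[Tuple, Dict[str, float]] = {}
        self.hits = 0

    def _key(self, features: Features) -> Tuple:
        # Round inputs so that near-identical requests share one cache entry.
        return tuple(sorted((n, round(v, self._precision)) for n, v in features.items()))

    def explain(self, features: Features, top_k: Optional[int] = None) -> Dict[str, float]:
        key = self._key(features)
        if key in self._cache:
            self.hits += 1
        else:
            self._cache[key] = self._explain(features)
        attributions = self._cache[key]
        if top_k is None:
            return dict(attributions)
        # Adaptive complexity: return only the k largest attributions by magnitude.
        ranked = sorted(attributions, key=lambda n: abs(attributions[n]), reverse=True)
        return {name: attributions[name] for name in ranked[:top_k]}


# Hypothetical expensive explainer; `calls` records how often it actually runs.
WEIGHTS = {"income": 0.5, "debt": -0.25}
calls: List[Features] = []


def slow_explain(features: Features) -> Dict[str, float]:
    calls.append(features)
    return {name: WEIGHTS[name] * value for name, value in features.items()}


cached = CachedExplainer(slow_explain)
full = cached.explain({"income": 4.01, "debt": 2.0})
brief = cached.explain({"income": 4.02, "debt": 2.0}, top_k=1)  # served from cache
```

The second call returns the stored attributions for the first input, trading a small loss in exactness for not recomputing the explanation.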

What emerging trends and future developments in explainable AI does ADVISORI anticipate, and how do you prepare organizations for the next generation of XAI technologies?

The explainable AI landscape is rapidly evolving with emerging technologies and methodologies that will reshape how organizations implement and benefit from transparent AI systems. ADVISORI stays at the forefront of XAI innovation while helping organizations prepare for future developments through forward-thinking strategies and adaptable architectures.

🔮 Emerging XAI Technology Trends:

• Causal explainable AI: Evolution toward explanation methods that reveal true cause-effect relationships rather than just correlations, providing deeper insights into AI decision-making processes.

• Multimodal explanation systems: Development of explanation methods that work across text, images, audio, and other data types, providing comprehensive transparency for complex AI applications.

• Automated explanation generation: Advanced systems that automatically generate human-readable explanations without manual intervention, scaling explanation capabilities across large organizations.

• Quantum-enhanced explainability: Exploration of quantum computing applications for complex explanation generation and analysis of high-dimensional AI systems.

🚀 Next-Generation XAI Capabilities:

• Conversational explanation interfaces: Development of natural language explanation systems that allow users to ask questions and receive contextual answers about AI decisions.

Success Stories

Discover how we support companies in their digital transformation

Generative AI in manufacturing

Bosch

AI process optimization for better production efficiency

Case study

Results

Reduction of the implementation time for AI applications to a few weeks

Improved product quality through early defect detection

Increased manufacturing efficiency through reduced downtime

AI automation in production

Festo

Intelligent networking for future-ready production systems

Case study

Results

Improved production speed and flexibility

Reduced manufacturing costs through more efficient use of resources

Increased customer satisfaction through personalized products

AI-supported manufacturing optimization

Siemens

Smart manufacturing solutions for maximum value creation

Case study

Results

Substantial increase in production output

Reduction of downtime and production costs

Improved sustainability through more efficient use of resources

Digitalization in steel trading

Klöckner & Co

Digitalization in steel trading

Case study

Results

More than EUR 2 billion in annual revenue through digital channels

Goal of generating 60% of revenue online by 2022

Improved customer satisfaction through automated processes
